Interceptors communicate with each other through hand gestures. However, these gestures are not always visible because of interference or poor lighting. An apparatus is therefore needed to register each gesture and send it to the fellow interceptors. Under poor lighting, the alternative to camera-based gesture recognition is sensor-based recognition, which has been built to deliver effective results.

Understanding Hand Gesture Recognition

For example, the hand posture signs presented by a human can be captured visually through a camera so that a robot can react to them. The recognition algorithm enables the robot to identify the hand posture in the input image as one of five possible directions (or counts). The recognised posture is then applied as a command input for the robot to perform a certain action or a specified task. For example, a “one” count could indicate “move forward”, a “five” count could

System Composition

The specified gestures involve movements of the fingers, wrist, and elbow. To detect changes in them, the device uses flex sensors, which measure how far each of these joints has been bent. To capture the gestures effectively, an Inertial Measurement Unit (IMU, MPU-9250) was also used. The parameters taken from the IMU are linear acceleration, gyroscopic acceleration (the gyroscope's angular-rate readings), and the angles in all three axes. An Arduino* Mega receives the signals from the sensors and transmits them to the processor.

Classification Of Gestures

The gestures can be grouped into static gestures and dynamic gestures. The two groups rely on different features: static gestures use the flex sensor values and the three-axis angles, whereas dynamic gestures use the flex sensor values, linear acceleration, gyroscopic acceleration, and the angles in all three axes.

Static Gesture Recognition

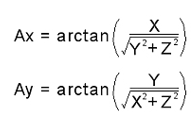

The first step is to calculate the angles from the acceleration values using:

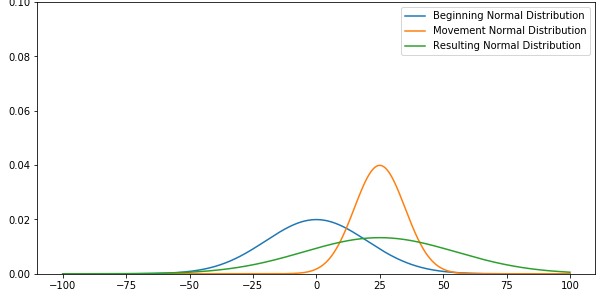

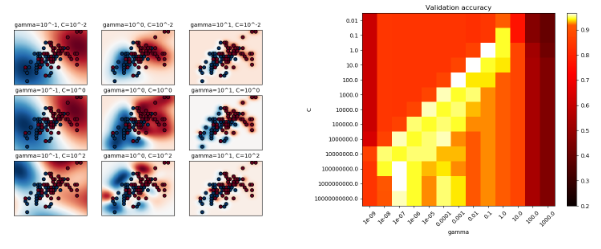

The angle values are noisy and have to be filtered to obtain stable readings, so a Kalman filter is applied to them. Both the flex sensor values and the filtered angles are then fed into a pre-trained Support Vector Machine (SVM) with a Radial Basis Function (Gaussian) kernel, which outputs the recognised gesture.
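As a rough illustration of this preprocessing, the sketch below derives roll and pitch from one accelerometer sample and smooths a stream of readings with a scalar Kalman filter. The tilt formulas are the commonly used ones and are an assumption here, since the article's own equations are only available as images. The names `tilt_angles` and `ScalarKalman`, and the noise variances, are hypothetical choices for the example, not values from the original system.

```python
import math


def tilt_angles(ax, ay, az):
    """Return (roll, pitch) in degrees from one accelerometer sample.

    Assumption: standard tilt formulas, valid when gravity dominates
    the measured acceleration.
    """
    roll = math.degrees(math.atan2(ay, az))
    pitch = math.degrees(math.atan2(-ax, math.sqrt(ay * ay + az * az)))
    return roll, pitch


class ScalarKalman:
    """One-dimensional Kalman filter for a slowly varying value."""

    def __init__(self, process_var=1e-3, measurement_var=0.5):
        self.q = process_var      # assumed process noise variance
        self.r = measurement_var  # assumed measurement noise variance
        self.x = None             # current state estimate
        self.p = 1.0              # current estimate variance

    def update(self, z):
        """Fold one measurement z into the estimate and return it."""
        if self.x is None:
            self.x = z            # initialise from the first reading
            return self.x
        self.p += self.q                  # predict: uncertainty grows
        k = self.p / (self.p + self.r)    # Kalman gain
        self.x += k * (z - self.x)        # correct towards the measurement
        self.p *= 1.0 - k                 # uncertainty shrinks
        return self.x


# Example: smooth a noisy roll signal that hovers around 10 degrees.
kf = ScalarKalman()
smoothed = [kf.update(z) for z in [10.5, 9.5, 10.4, 9.6, 10.3, 9.7]]
```

In a setup like the one described, one such filter per angle would run on the incoming samples, and the filtered angles would be concatenated with the flex sensor values to form the SVM input.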

Dynamic Gesture Recognition

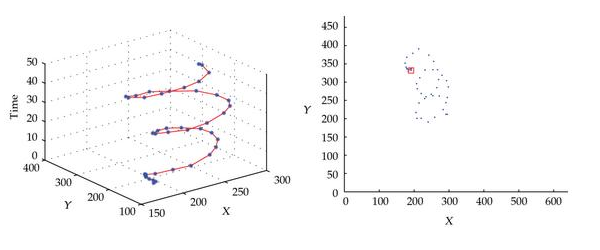

In dynamic gesture recognition, the angles, linear accelerations, and gyroscopic accelerations are filtered using a Kalman filter. The values are stored in a temporary file, with each line representing one time point. Once the values are stored, every value is normalised column-wise. Then 50 time points are sampled from them and flattened into a single vector of 800 dimensions, that is, 16 values per time point.
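The feature-building step can be sketched as follows. The article does not state which normalisation is used or how the 50 time points are chosen, so min-max scaling and evenly spaced sampling are assumptions here, and the function names are hypothetical. The 16 values per time point follow from 800 / 50.

```python
def normalise_columns(rows):
    """Min-max scale every column to [0, 1]; constant columns map to 0.0."""
    columns = list(zip(*rows))
    lows = [min(col) for col in columns]
    spans = [max(col) - min(col) for col in columns]
    return [
        [(v - lo) / span if span else 0.0
         for v, lo, span in zip(row, lows, spans)]
        for row in rows
    ]


def sample_rows(rows, n_points=50):
    """Pick n_points rows at evenly spaced positions across the recording."""
    if n_points < 2 or len(rows) < n_points:
        raise ValueError("need n_points >= 2 and at least n_points rows")
    last = len(rows) - 1
    indices = [round(i * last / (n_points - 1)) for i in range(n_points)]
    return [rows[i] for i in indices]


def gesture_vector(rows, n_points=50):
    """Normalise, sample, and flatten a recording into one feature vector."""
    sampled = sample_rows(normalise_columns(rows), n_points)
    return [value for row in sampled for value in row]


# Example: a synthetic recording of 120 time points with 16 values each.
recording = [[float(t + c) for c in range(16)] for t in range(120)]
vector = gesture_vector(recording)  # 50 * 16 = 800 values
```

Sampling a fixed number of time points gives every gesture the same vector length regardless of how long it took to perform, which is what a fixed-input classifier such as an SVM needs.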

The resulting vector is fed into an SVM with a Radial Basis Function (Gaussian) kernel. The test set includes gestures such as ‘Column Formation’, ‘Vehicle’, ‘Ammunition’, and ‘Rally-Point’, which resemble one another, so gestures with similar features are grouped into one class. If the first SVM assigns a sample to one of these groups, the sample is fed into a second SVM that is trained only to distinguish the gestures within that group.
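The two-stage arrangement can be sketched as a small dispatch function. In this sketch the classifiers are plain callables standing in for trained SVMs, and the group label `similar_group`, the thresholds, and the stand-in rules are all hypothetical; only the routing logic reflects the description above.

```python
def classify_two_stage(features, coarse_model, group_models):
    """Run the coarse classifier, then refine if it returned a group label.

    coarse_model: callable mapping a feature vector to a gesture or group label.
    group_models: dict mapping each group label to a callable that separates
                  the gestures inside that group.
    """
    label = coarse_model(features)
    refine = group_models.get(label)
    return refine(features) if refine is not None else label


# Stand-in classifiers (hypothetical rules, used only to show the routing).
def coarse(features):
    return "similar_group" if features[0] > 0.5 else "Halt"


def within_group(features):
    return "Vehicle" if features[1] > 0.5 else "Rally-Point"


models = {"similar_group": within_group}
first = classify_two_stage([0.9, 0.8], coarse, models)   # refined inside the group
second = classify_two_stage([0.1, 0.8], coarse, models)  # coarse label is final
```

Keeping a dedicated classifier per group lets the first stage focus on coarse differences while the second stage is trained only on the gestures that are easily confused.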

In Conclusion

In today’s digitised world, processing capability has increased dramatically, with processors advanced to the point where they can support humans in complicated tasks. Yet input technologies remain a major bottleneck for some of these tasks, under-utilising the available resources and limiting the expressiveness of applications. Computer vision methods for hand gesture interfaces must surpass current performance in terms of robustness and speed to deliver interactivity and usability. Further research in feature extraction, classification methods, and gesture representation is expected to advance the ultimate goal of humans interfacing with machines on their own natural terms.