The next interview in our series for this month's theme, the leading tools and techniques used by analytics and artificial intelligence practitioners, is with Ramasubramanian (Ramsu) Sundararajan, head of AI Labs, and Tapan Khopkar, head of Innovation Labs, at Cartesian Consulting. In their jointly curated responses, the two tech experts walk us through the tools most commonly used in analytics and AI, whether practitioners prefer open source or paid tools, what goes into a data science toolkit, and more.

Analytics India Magazine: What are the most commonly-used tools in analytics, AI and data science?

SQL for basic data access. Much of what we work with is still in relational databases, and just getting the data out is key. For analysis after that, R or Python along with the contributed libraries largely cover what we need to do. For visualisation, Tableau is quite popular. Above all, never underestimate the power of MS Excel!
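To make the SQL-then-analysis split concrete, here is a minimal Python sketch. It assumes an in-memory SQLite database with a hypothetical `sales` table standing in for a real relational source: SQL handles data access and aggregation close to the data, and Python takes over for the analysis step.

```python
import sqlite3
from statistics import mean

# Hypothetical sales table standing in for a relational source; in practice
# this would be a connection to the production database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales (region, amount) VALUES (?, ?)",
    [("north", 120.0), ("north", 80.0), ("south", 200.0),
     ("south", 50.0), ("west", 90.0)],
)

# Step 1: SQL does the basic data access and aggregation.
rows = conn.execute(
    "SELECT region, SUM(amount) AS total FROM sales "
    "GROUP BY region ORDER BY region"
).fetchall()

# Step 2: hand the result to Python for further analysis.
totals = dict(rows)
average_region_total = mean(totals.values())

print(totals)                # {'north': 200.0, 'south': 250.0, 'west': 90.0}
print(average_region_total)  # 180.0
```

The same pattern carries over to R, or to pandas in Python, once the query result is in memory.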

AIM: What is the most productive tool that you have come across?

It depends on the task. If the challenge is to derive simple insights from data, SQL along with a good visualisation tool like Tableau is often sufficient.

When it comes to data manipulation and model building, productivity is largely a function of one's familiarity with the language and the availability of libraries and contributed code. For instance, we find the R ecosystem quite rich for statistical modelling, while Python scores for deep learning, NLP, etc.

AIM: Do you prefer tools that are open source or paid? Please elaborate on the benefits.

Here are the factors that play into this decision:

- The size of the user community largely determines how easy it is for people to get guidance, contributed code, tutorials etc. on a particular platform. By and large, user communities grow faster when the product is free; however, this is not always true.

- The traditional view used to be that, while open source grew faster, proprietary, paid software had better product support. With communities like Stack Overflow, GitHub etc., this is not quite as true as it used to be. However, this applies mainly to using the tool as it is, rather than to the larger community modifying it. Take an open source tool like TensorFlow: even though it's open source, if there's an issue with it, the large majority of users will wait for Google to fix it. In that sense, it's no different from paid software.

AIM: What are the most common issues you face while dealing with data? How is selecting the right tool critical for problem-solving?

Data is rarely neat. When we study data analytics in college or in online courses, the story often starts where the data is already clean and ready for modelling algorithms. Even data-cleaning topics such as handling missing data are often treated perfunctorily. Real-life problems come with data that is messy, incomplete, inconsistent and sometimes very large. Additionally, we don't always have complete visibility into the data generation process, so we are forced to rely on our own assumptions while manipulating it.
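As an illustration of what "messy" tends to mean in practice, here is a small Python sketch on a hypothetical CSV extract with inconsistent city labels, stray whitespace, blank and placeholder values, and a duplicated row. The cleaning rules (treating blanks and "n/a" as missing, title-casing city names, dropping exact duplicates) are illustrative assumptions; each one is exactly the kind of assumption the analyst has to make when the data generation process isn't fully visible.

```python
import csv
import io

# Hypothetical raw extract showing typical problems: inconsistent labels,
# stray whitespace, blank fields, placeholder text and a duplicate row.
RAW = """customer_id,city,spend
101, Mumbai ,250
102,mumbai,
103,PUNE,n/a
104,Pune,400
104,Pune,400
"""

# Assumption: these strings all mean "value not recorded".
MISSING_MARKERS = {"", "n/a", "na", "null"}

def clean_rows(text):
    """Normalise labels, mark missing values as None and drop exact duplicates."""
    seen = set()
    cleaned = []
    for row in csv.DictReader(io.StringIO(text)):
        city = row["city"].strip().title()
        spend_raw = row["spend"].strip().lower()
        spend = None if spend_raw in MISSING_MARKERS else float(spend_raw)
        record = (int(row["customer_id"]), city, spend)
        if record in seen:
            continue  # skip an exact duplicate of a row already kept
        seen.add(record)
        cleaned.append(record)
    return cleaned

rows = clean_rows(RAW)
missing = sum(1 for _, _, spend in rows if spend is None)
print(rows)
print(f"{missing} of {len(rows)} rows have no spend value")
```

The sketch only flags missing values; whether to impute, drop or model them depends on the problem and is deliberately left open here.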

If the chosen tool doesn't provide the functionality a particular kind of problem needs, that makes life hard for the analyst. Otherwise, it largely depends on the analyst's familiarity with the tool. For instance, some people are most comfortable using R with the data.table library, others with R and dplyr, and yet others with Python and pandas.

For large datasets, other issues also play a critical part. Is there a latency constraint during development, deployment, or both? Is the solution largely parallelizable? Is it likely to involve data-parallel or compute-parallel operations? These aspects determine the choice of tool, and that choice becomes critical to productivity.

AIM: How do you select tools for a given task?

The critical factors, in no particular order, are:

- Functionality or versatility

- Scalability

- Availability of expertise

- Availability of contributed code and libraries

- Cost

- Performance

- Longevity of the platform

AIM: What are the most user-friendly languages and tools that you have come across?

Excel, RStudio and Tableau come to mind right away. For development in Java or C++, Eclipse is very easy to use.

AIM: What does an ideal data scientist toolkit look like?

The ideal data scientist is someone who has sufficiently deep knowledge of enough tools or platforms so that he/she’s able to pick the right tool for solving parts of the problem, and then able to piece together a solution that may involve multiple platforms. Viewed through this lens, the ideal data scientist toolkit is better defined by what it is not. It is not a static toolkit.

AIM: What is the most preferred language used by the team?

Our team mostly uses Python for text and image datasets, and R for numeric datasets. For applications where scale is an issue, we use Java.

AIM: What is the most preferred cloud provider — AWS, Google or Azure?

We're neutral on this, for now.

AIM: What are some of the tools used for scaling data science workloads? For example, Docker is gaining popularity vis-à-vis Spark.

Scale isn’t one issue – it has many hues.

For instance, large batch processing of eminently parallelizable datasets is usually well-solved using map-reduce algorithms, whereas Scala does well in real-time scenarios. Large-scale computation in machine learning algorithms with inherent parallelism, such as neural networks, is now often done using GPUs. And sometimes, the answer to the scale problem is to simply rewrite the code in C++ or Java instead of in Python or R – one doesn't always need to throw a bigger computer or a parallel computing framework at the problem, just a better algorithm in a different language.
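To show why map-reduce suits eminently parallelizable batch work, here is a single-process Python sketch of the pattern on hypothetical log chunks. Each chunk is summarised independently (map), and the partial results are merged with an associative operation (reduce). A framework such as Hadoop MapReduce or Spark would distribute the map step across machines; this version only illustrates the structure, not the distribution.

```python
from collections import Counter
from functools import reduce

# Hypothetical input already split into independent chunks, the way a
# framework would partition a large dataset across workers.
chunks = [
    ["error", "ok", "ok"],
    ["ok", "timeout", "error"],
    ["ok"],
]

def map_chunk(chunk):
    """Map step: summarise one chunk without looking at any other chunk."""
    return Counter(chunk)

def reduce_counts(left, right):
    """Reduce step: merge two partial results (Counter addition is associative)."""
    return left + right

# Because map_chunk calls are independent, they could run on separate workers.
partials = map(map_chunk, chunks)
totals = reduce(reduce_counts, partials, Counter())
print(dict(totals))
```

The property that makes this scale is that neither step needs the whole dataset at once: the map step sees one chunk, and the reduce step can merge partial results in any grouping.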

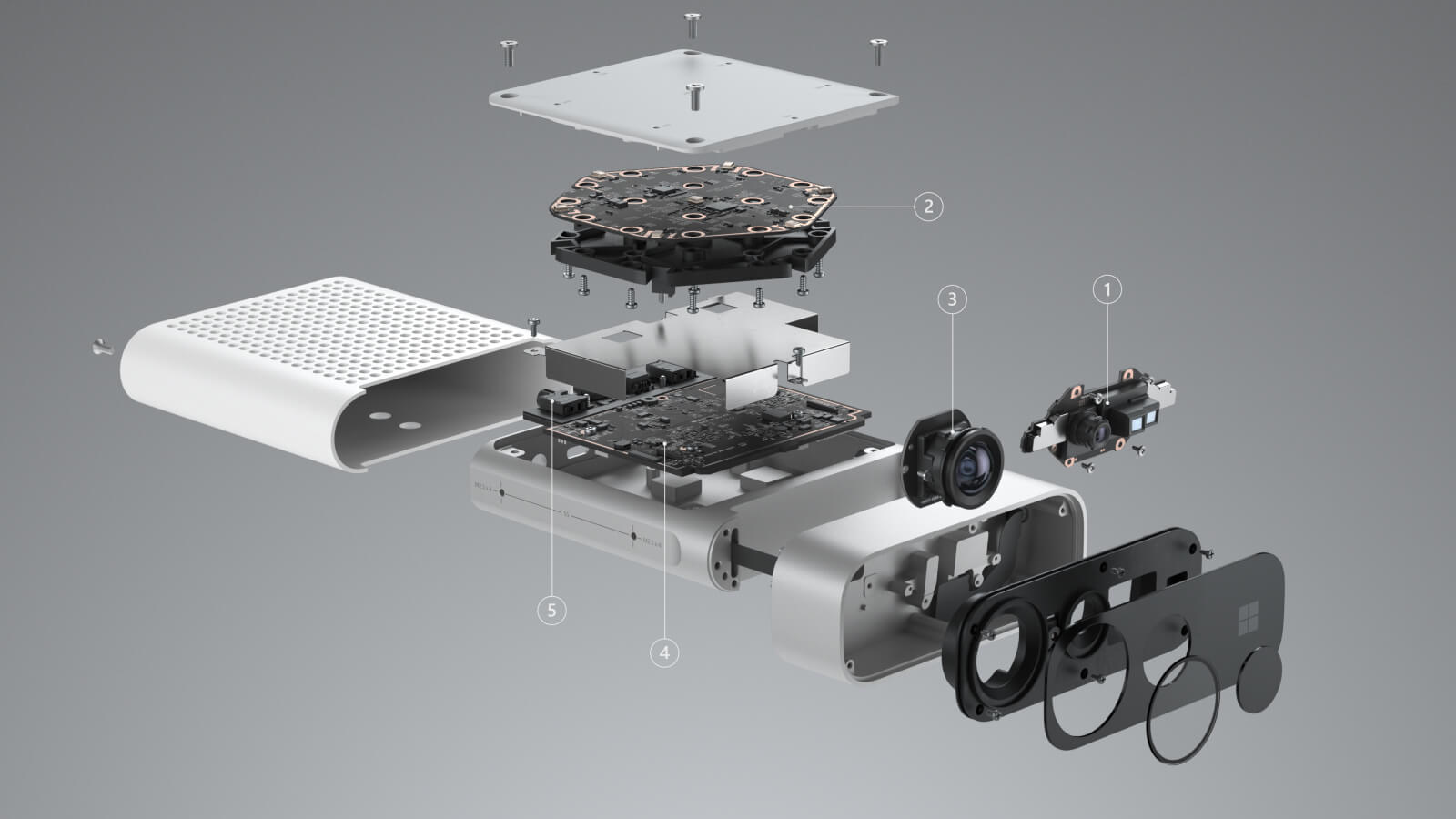

Further, scale issues look different at runtime. How quickly is the data to be processed and acted upon, relative to its speed of acquisition? Where does the solution need to reside – on the cloud or on an edge device with limited computing and memory capacity?

AIM: What are some of the proprietary tools developed in-house by the company?

Watch this space!