Artificial intelligence is now being applied in almost every sector that involves automation, and deep learning is one of the trickiest approaches used to build and improve human-like computing systems. To help product developers, Google, Facebook and other big tech companies have released frameworks for the Python environment in which one can learn, build and train a wide range of neural networks.

Google’s TensorFlow is an open source deep learning framework that has grown in popularity over the years. PyTorch, the newer framework, is receiving a lot of attention from beginners because of its easy-to-write code. PyTorch is built on Python, C++ and a CUDA backend, and is available for Linux, macOS and Windows.

There are a few differences between these two widely used frameworks, mainly in how code is implemented, how models are visualised, and whether the computation graph is static or dynamic.

Let us take a look at the differences:

Implementing The Code

PyTorch follows a dynamic computation graph approach to initialising, assigning and building graphs: the graph is built as the code runs. Users who are familiar with mathematical libraries in Python will find it easy, since one does not have to scratch their head to build the graph up front. You can write the input and output functions directly, the way you want, without declaring tensor dimensions ahead of time. With CUDA support on top, this makes life much easier.
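As a minimal sketch of what "dynamic" means here, the snippet below uses an ordinary Python `if` inside the forward computation, and PyTorch records whichever branch actually ran. The function name `forward` and the tensor values are illustrative assumptions, not taken from any particular model.

```python
import torch


def forward(x, w):
    # Plain Python control flow decides which operations are recorded
    # on this particular call.
    y = x * w
    if y.sum() > 0:
        y = y * 2
    return y.sum()


w = torch.tensor([1.0, 2.0, 3.0], requires_grad=True)  # illustrative weights
x = torch.tensor([1.0, 1.0, 1.0])                      # illustrative input

loss = forward(x, w)    # the graph is recorded while this line runs
loss.backward()         # gradients flow back through the recorded graph
grad = w.grad.tolist()  # d(loss)/d(w) for the branch that was taken
```

No separate graph-building or session step is needed: running the function is what builds the graph.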

In TensorFlow, by contrast, one has to define the dimensions of the tensors and the graph first, and assign placeholders for the inputs. Once this is done, a session has to be run to carry out the computations. Such a pain, isn’t it?

For example, tf.Session() creates a session and tf.Variable() creates a variable that holds the weights. Once these are initialised, one can build a neural network for training in TensorFlow. (These are TensorFlow 1.x calls; in TensorFlow 2.x, tf.Session() is available as tf.compat.v1.Session().)
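The build-then-run workflow described above can be sketched roughly as follows. This assumes TensorFlow 2.x, where the 1.x-style placeholder and session calls are reached through `tf.compat.v1`; the tensor names, shapes and values are made up for illustration.

```python
import tensorflow as tf

tf1 = tf.compat.v1  # 1.x-style API as exposed by TensorFlow 2.x

# Step 1: describe the computation. Nothing is computed yet.
graph = tf.Graph()
with graph.as_default():
    x = tf1.placeholder(tf.float32, shape=[None, 3], name="x")
    w = tf1.Variable([[1.0], [2.0], [3.0]], name="w")  # illustrative weights
    y = tf.matmul(x, w)
    init = tf1.global_variables_initializer()

# Step 2: run the graph inside a session, feeding the placeholder.
with tf1.Session(graph=graph) as sess:
    sess.run(init)
    result = sess.run(y, feed_dict={x: [[1.0, 1.0, 1.0]]})
```

The two-step shape (define everything, then run it in a session) is the part that the dynamic approach removes.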

Documentation

Documentation for both PyTorch and TensorFlow is widely available, even though both are still under active development and PyTorch is a more recent release than TensorFlow. Both frameworks have plenty of documentation in which implementations are well described.

Lots of tutorials are available for both frameworks, which helps one focus on learning and implementing them through use cases.

PyTorch and TensorFlow tutorials can be found on the official website of each project.

Device Adaptation

Both PyTorch and TensorFlow offer GPU support. The main difference is that when TensorFlow runs on a GPU, by default it reserves nearly all the memory of every available GPU. This can be limited by assigning a specific GPU device to the process: tf.device() lets you select the preferred device. As TensorFlow follows a static computation graph approach, it is easy to optimise the code on this framework.

In PyTorch, variables can be assigned weights and run at the same time, with the framework building the graph required for the computation as it goes. GPU support is available once PyTorch is installed with CUDA, but tensors are created on the CPU unless told otherwise: X.cuda() or X.to(device) moves a tensor to the GPU, and X.cpu() brings it back to run on the CPU.
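A small sketch of explicit device placement in PyTorch follows. It inspects where each tensor actually lives rather than assuming, and falls back to the CPU when no CUDA device is present; the variable names are illustrative.

```python
import torch

# Pick the GPU if one is usable, otherwise stay on the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

x = torch.ones(2, 3)   # no device given when the tensor is created
x_dev = x.to(device)   # explicit move to the chosen device
x_cpu = x_dev.cpu()    # X.cpu() returns the tensor on the CPU

print(x.device, x_dev.device, x_cpu.device)
```

Printing the `.device` attribute is a quick way to confirm where a computation will run before starting a long training job.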

In TensorFlow, a with tf.device('/cpu:0'): block is used to run on the CPU. One can also select a GPU, with tf.device('/gpu:0') for the first GPU or tf.device('/gpu:1') for the second.
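The sketch below shows device selection with `tf.device` in TensorFlow 2.x. The memory-growth call at the top is an optional extra that is not part of the discussion above: it asks TensorFlow to allocate GPU memory on demand instead of reserving it all, and does nothing on a machine without GPUs. The matrix values are illustrative.

```python
import tensorflow as tf

# Optional: allocate GPU memory on demand rather than all at once.
# This has to happen before the GPUs are first used, hence the guard.
for gpu in tf.config.list_physical_devices("GPU"):
    try:
        tf.config.experimental.set_memory_growth(gpu, True)
    except RuntimeError as err:
        print(err)

# Pin the computation to the CPU; "/gpu:0" would select the first GPU.
with tf.device("/cpu:0"):
    a = tf.constant([[1.0, 2.0], [3.0, 4.0]])
    b = tf.matmul(a, a)

on_cpu = b.device.endswith("CPU:0")  # where the result was placed
```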

Model Visualisation

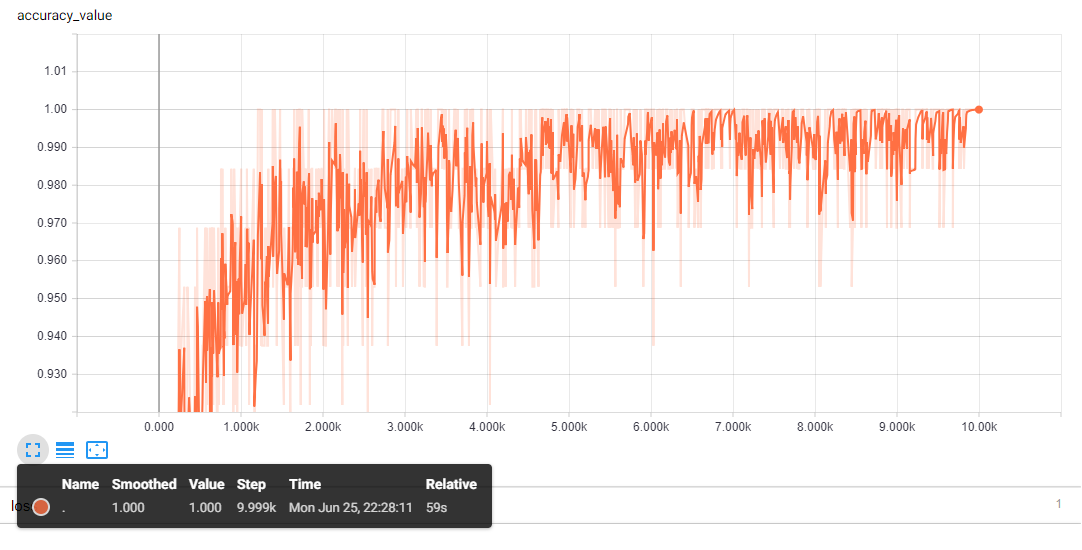

Visualisation is key to understanding the performance and workings of a model. TensorFlow offers real-time representation of graphs and models through a tool called TensorBoard, which comes in very handy. With it, one gets not only a pictorial representation of the neural network but also loss and accuracy graphs in real time, which show how precise the model is at a particular iteration.

This real-time analysis is where TensorFlow excels compared to PyTorch, which at the time of writing lacked a comparable built-in feature. You can also visualise the neural network as a flowchart, and play back audio files if they are present in your data, which is pretty awesome.
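As a rough sketch of how a loss curve reaches TensorBoard, the snippet below writes a few scalar values with the `tf.summary` API from TensorFlow 2.x. The loss numbers are placeholders invented for illustration, not results from a real model, and the log directory is a temporary folder.

```python
import tempfile

import tensorflow as tf

log_dir = tempfile.mkdtemp()  # throwaway directory for the event files
writer = tf.summary.create_file_writer(log_dir)

placeholder_losses = [0.9, 0.6, 0.4, 0.3]  # made-up values, one per step

with writer.as_default():
    for step, loss in enumerate(placeholder_losses):
        # Each call adds one point to the "loss" curve.
        tf.summary.scalar("loss", loss, step=step)

writer.flush()
print("Event files written to", log_dir)
```

Pointing TensorBoard at that folder with `tensorboard --logdir <log_dir>` should then show the curve in the browser.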

Conclusion

In the future, PyTorch might add a visualisation feature similar to TensorBoard. PyTorch is gaining popularity largely because of its dynamic computation approach and simplicity. Beginners are advised to start with PyTorch before moving on to TensorFlow, as this helps them focus on the model rather than spend time on building the graph.