The Artificial Intelligence misinformation epidemic centred around the idea that brains work like neural nets seems to be coming to a head. Researchers are pivoting to new forms of discovery, focusing on neural coding that could unlock the possibility of brain-computer interfaces. Of late, there have been many projects and a great deal of ongoing research aimed at replicating human-like thought, mostly through hardware and software simulations of human brain structures. But the question here is: can one simulate the human brain? Are artificial neural networks a good model of the human brain, and, most importantly, can neural networks, which are good devices for computation, really imitate the human mind?

AI Brain vs Human Brain

To start with, neuroscientists point to two big problems facing brain simulation. First, the human brain is extremely complex, with around 100 billion neurons and 1,000 trillion synaptic interconnections. Second, it is not digital: the brain relies on electrochemical signaling with inter-related timing and analogue components, the sort of molecular and biological machinery that neuroscientists are only beginning to unravel. This means that, despite repeated attempts, simulating the human mind remains extremely difficult and lies far beyond current technological reach.
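Dividing the two figures gives a back-of-the-envelope sense of the brain's connectivity: roughly 10,000 synapses per neuron on average (an average only; actual counts vary widely between neuron types and brain regions).

```latex
\frac{1{,}000 \times 10^{12}\ \text{synapses}}{100 \times 10^{9}\ \text{neurons}}
  = \frac{10^{15}}{10^{11}}
  = 10^{4}\ \text{synapses per neuron}
```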

On the other hand, neural networks only roughly approximate the structure of the human brain. A neural network architecture is arranged into layers, each consisting of many simple processing units (nodes) that are connected to several nodes in the layers above and below. Data is fed into the lowest layer, and each layer's output is relayed to the next layer up. Unlike humans, artificial neural networks must be fed massive amounts of data in order to learn.
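The layered relay described above can be sketched in a few lines of plain Python. This is a minimal illustration, assuming a fully connected network with a sigmoid activation; the function names (`layer_forward`, `network_forward`) and the toy weights are hypothetical, chosen only to show how data moves from the lowest layer upward.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic activation: squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def layer_forward(inputs, weights, biases):
    """One layer: each node takes a weighted sum of every node in the
    layer below, adds its bias, and applies the activation."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + b)
        for node_weights, b in zip(weights, biases)
    ]

def network_forward(inputs, layers):
    """Feed data into the lowest layer and relay each layer's output upward."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations

# Hypothetical toy network: 2 inputs -> 3 hidden nodes -> 1 output node.
# The weights are arbitrary illustrative values, not trained ones.
toy_layers = [
    ([[0.5, -0.4], [0.3, 0.8], [-0.6, 0.1]], [0.0, 0.1, -0.2]),
    ([[1.0, -1.0, 0.5]], [0.05]),
]

output = network_forward([1.0, 0.5], toy_layers)
print(output)  # a single value between 0 and 1
```

Real networks learn their weights from data rather than having them set by hand; the sketch only covers the forward relay.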

While artificial neural nets were initially designed to function like biological neural networks, the neural activity in our brains is far more complex than studying artificial neurons alone might suggest. Neuroscientists point out that real neurons do not simply produce an output by summing their weighted inputs. Nor do real neurons stay "on" until their inputs change; instead, their outputs may encode information in complex patterns of pulses.
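To make the contrast concrete, here is a sketch comparing a weighted-sum artificial neuron with a leaky integrate-and-fire (LIF) neuron. LIF is a standard but heavily simplified spiking model, swapped in here purely for illustration; it does not come from the article, and real neurons are far more complex still. The parameter values (`tau`, `v_threshold`, the input currents) are arbitrary assumptions.

```python
def artificial_neuron(inputs, weights, threshold=0.5):
    """Classic artificial neuron: weighted sum of inputs, then a threshold.
    For a constant input it produces a constant output level."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0

def lif_spike_train(input_current, steps, tau=10.0, v_threshold=1.0,
                    v_reset=0.0, dt=1.0):
    """Leaky integrate-and-fire neuron (a simplified spiking model).
    The membrane potential v leaks toward 0 and integrates the input; when
    it crosses v_threshold the neuron emits a spike (1) and v is reset."""
    v = v_reset
    spikes = []
    for _ in range(steps):
        v += dt * (-v / tau + input_current)
        if v >= v_threshold:
            spikes.append(1)
            v = v_reset
        else:
            spikes.append(0)
    return spikes

print(artificial_neuron([1, 1], [0.4, 0.3]))
print(lif_spike_train(0.3, 20))
print(lif_spike_train(0.2, 20))
```

Given a constant input, the artificial neuron settles on one fixed output, while the LIF neuron encodes input strength in the timing and rate of its pulses: the stronger input of 0.3 fires more often over the same 20 steps than the input of 0.2.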

Are Neural Networks Imitations of Mind?

A paper by Dr Gaetano Licata, published in the Journal of Computer Science & Systems Biology, questions whether artificial neural networks are actually a good model of the human mind. According to Dr Licata, the high complexity of the human brain makes it impossible to consider neural networks good models of the human mind, although that does not mean they are not good devices for parallel computation. The human mind is the result of the biophysical structure of a nervous system in a body that evolved to survive in its environment, and the power of the human mind can be attributed to this long and hard evolution.

This is where the differences kick in:

a) The goal of neural networks is to mimic the behavior of the brain, not the mind: here the neural network strategy misses the yawning gap between brain and mind. Scientists are still struggling to define what “thought” is, which means that the mind/brain translation problem will not be overcome until scientists arrive at a clear definition of human thought, consciousness, perception and action as cerebral phenomena.

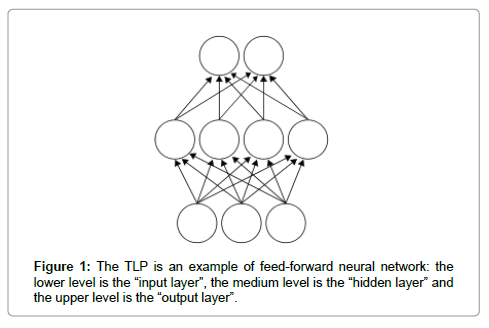

b) Artificial neural networks vs the human brain in terms of architecture: The human brain is a “network” of 100 billion neurons, each connected to many thousands of other neurons, which means the brain contains hundreds of trillions of connections. One of the most common kinds of neural network architecture is the simple three-layer structure of artificial neurons, such as the three-layer perceptron (TLP) shown in the figure.

c) Neural networks can be either feed-forward or feedback networks, the paper emphasizes. In feed-forward neural networks such as the TLP, information flows in one direction, from the input layer through the hidden layer (there can be more than one) to the output layer, and there are no cycles. In feedback (or recurrent) networks, by contrast, there are no input or output layers, and all neurons act as both input and output units.

d) The human brain, on the other hand, works like a feed-forward network with layers, but it also has many connections that carry information backward to neurons in a “preceding layer”. In other words, the brain is a feedback network that can contain many cycles of neurons.

e) Artificial feedback networks are unstable: According to the paper, artificial feedback networks can fluctuate, and it can be very hard to obtain a stable output from a given input. How our brain, itself a feedback network, manages to produce a stable output therefore remains a mystery.

f) Neurons in a neural network are simpler than neurons in a human brain: According to a paper by researchers from DeepMind and the University of Toronto, simulated neurons all share a similar, simple form, whereas the neocortex, the region of the brain responsible for thinking and planning, has neurons with complex tree-like shapes. Each cell has ‘roots’ deep inside the brain and ‘branches’ close to the surface.

g) Understanding how the brain solves the credit assignment problem: According to researchers at the University of Toronto, a large gap exists between deep learning in AI and the current understanding of learning and memory in neuroscience; in particular, neuroscientists lack a solution to the credit assignment problem. Drawing on work by Yann LeCun and Yoshua Bengio, they note that “learning to optimize behavioral or cognitive function requires a method for assigning ‘credit’ (or ‘blame’) to neurons for their contribution to the final behavioral output”. Assigning credit in multi-layer networks is difficult because the behavioral impact of neurons in early layers of a network depends on the downstream synaptic connections.
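Point (b) describes the three-layer perceptron. A classic way to see what the hidden layer adds is the XOR function, which no single threshold unit can compute but a TLP with two hidden units can. The sketch below is illustrative only: the weights are hand-picked rather than learned, and the names `step` and `tlp_xor` are hypothetical.

```python
def step(z: float) -> int:
    """Threshold activation: the unit fires (1) if its net input is >= 0."""
    return 1 if z >= 0 else 0

def tlp_xor(x1: int, x2: int) -> int:
    """Three-layer perceptron: an input layer (x1, x2), a hidden layer of
    two threshold units and one output unit. Weights are set by hand."""
    h_or = step(x1 + x2 - 0.5)       # fires if at least one input is 1
    h_and = step(x1 + x2 - 1.5)      # fires only if both inputs are 1
    return step(h_or - h_and - 0.5)  # fires for OR but not AND, i.e. XOR

for a in (0, 1):
    for b in (0, 1):
        print(a, b, tlp_xor(a, b))
```

Information flows strictly from inputs to hidden units to the output, with no cycles, which is what makes this a feed-forward network.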
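Point (c) says that feedback networks have no separate input or output layers. A Hopfield network is a well-known example: the same neurons are set to an initial pattern (the input) and read off once the network settles (the output). The following is a minimal sketch, assuming ±1 neurons, a Hebbian weight rule and one-at-a-time updates; it represents one family of recurrent networks, not all of them, and the function names are hypothetical.

```python
def train_hopfield(patterns):
    """Hebbian rule: w_ij = sum over stored patterns of p_i * p_j,
    with no self-connections (w_ii = 0)."""
    n = len(patterns[0])
    weights = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j]
    return weights

def recall(weights, state, sweeps=5):
    """Every neuron is both an input (its initial value) and an output
    (its final value). Neurons update one at a time, each seeing the
    latest values of all the others."""
    state = list(state)
    n = len(state)
    for _ in range(sweeps):
        for i in range(n):
            net = sum(weights[i][j] * state[j] for j in range(n))
            state[i] = 1 if net >= 0 else -1
    return state

stored = [1, -1, 1, -1, 1, -1]
w = train_hopfield([stored])
noisy = [1, 1, 1, -1, 1, -1]  # same pattern with the second neuron flipped
print(recall(w, noisy))
```

Starting from the corrupted pattern, the feedback among neurons pulls the state back to the stored one.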
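The instability mentioned in point (e) can be reproduced with just two neurons. In this toy model (an assumption chosen for illustration, not an example from the paper), two ±1 neurons excite each other. If both update simultaneously from an inconsistent starting state, the network flips back and forth indefinitely; if they update one at a time, it settles. The function names are hypothetical.

```python
def sign(z: float) -> int:
    """Binary neuron output: +1 if the net input is >= 0, else -1."""
    return 1 if z >= 0 else -1

def run_synchronous(weights, state, steps):
    """Update every neuron at once from the previous state; return the history."""
    history = [tuple(state)]
    for _ in range(steps):
        state = [sign(sum(w * s for w, s in zip(row, state))) for row in weights]
        history.append(tuple(state))
    return history

def run_asynchronous(weights, state, sweeps):
    """Update neurons one at a time, each seeing the latest values of the others."""
    state = list(state)
    history = [tuple(state)]
    for _ in range(sweeps):
        for i, row in enumerate(weights):
            state[i] = sign(sum(w * s for w, s in zip(row, state)))
        history.append(tuple(state))
    return history

# Two neurons that excite each other (symmetric weight 1, no self-connections).
w = [[0, 1], [1, 0]]
start = [1, -1]
print(run_synchronous(w, start, 4))
print(run_asynchronous(w, start, 4))
```

The synchronous run alternates between (1, -1) and (-1, 1) without ever settling, while the asynchronous run reaches (-1, -1) after the first sweep and stays there. In this toy, the update scheme, not just the wiring, decides whether the feedback network produces a stable output.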
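Point (g) notes that credit for an early-layer neuron depends on the downstream connections. This can be seen in the smallest possible multi-layer network. The sketch below uses backpropagation (the chain rule), which is how deep learning solves credit assignment in artificial networks; whether and how the brain does something equivalent is exactly the open question raised above. The two-weight model and the function names are hypothetical illustrations.

```python
import math

def forward(x, w1, w2):
    """Two-layer chain: hidden unit h = tanh(w1 * x), output y = w2 * h."""
    h = math.tanh(w1 * x)
    return h, w2 * h

def loss(x, target, w1, w2):
    """Squared error between the network output and the target."""
    _, y = forward(x, w1, w2)
    return 0.5 * (y - target) ** 2

def grad_w1(x, target, w1, w2):
    """Backpropagated credit for the early-layer weight w1 (chain rule):
    dL/dw1 = (y - target) * w2 * (1 - h**2) * x.
    The downstream weight w2 appears in the product, so the blame given to
    the early layer depends on how its output is used further downstream."""
    h, y = forward(x, w1, w2)
    return (y - target) * w2 * (1 - h ** 2) * x

# Same input, target and early weight; only the downstream weight changes.
x, target, w1 = 1.0, 1.0, 0.5
for w2 in (2.0, -2.0):
    print(f"w2={w2:+.1f}  dL/dw1={grad_w1(x, target, w1, w2):+.4f}")
```

With w2 = 2 the early weight receives a small negative gradient (gradient descent would increase w1 slightly); with w2 = -2 it receives a large positive one (gradient descent would decrease w1). The early layer's activity is identical in both cases, yet the credit it receives differs in sign and size.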

Outlook

Undeniably, AI has pervaded many aspects of our lives and become the new normal. It has also revolutionized industry by demonstrating human-level accuracy in tasks such as pattern recognition and image classification. A growing body of research and applications is bringing AI into everyday life, and progress in self-driving technology and in face and speech recognition signals the mass-market arrival of AI. However, despite the speed of these advances, “full-blown sentience is still a very long shot”.

Blake A Richards, an Assistant Professor at the University of Toronto, noted in a journal article on the growing interest in neuroscience: “The next decade will see [a] huge volume of research [in] neuroscience and AI, where neuroscience discoveries by scientists will put us on the path of new AI which will help us understand our experimental data in neuroscience”.