Artificial intelligence has been mastering more and more games over the years. Only last week, for example, the AI-powered Pluribus beat professional players at six-player Texas Hold’em.

Recently, scientists from the University of California, Irvine, developed an algorithm called DeepCubeA that can solve the Rubik’s cube in roughly 20 moves. The study was published in Nature Machine Intelligence earlier this month. DeepCubeA has also beaten Feliks Zemdegs, who solved the puzzle in a record 4.22 seconds in 2018.

Pierre Baldi, a computer scientist at the University of California, Irvine, and one of the algorithm’s developers, said that while AI has already mastered games like Go and chess, the Rubik’s cube is a complex puzzle for a computer to solve. He added that this latest algorithm could usher in a new generation of deep learning systems far more advanced than those currently available.

Baldi stated, “The solution to the Rubik’s cube involves more symbolic, mathematical and abstract thinking, so a deep learning machine that can crack such a puzzle is getting closer to becoming a system that can think, reason, plan and make decisions.”

The Technology Behind DeepCubeA

DeepCubeA is based on deep reinforcement learning. Working backwards from the goal state, it learns to solve increasingly difficult states without any domain-specific knowledge or information supplied by humans. Deep reinforcement learning is, in essence, the use of deep neural networks to solve reinforcement learning problems.

One of the researchers said in an interview, “It is very unlikely that the machine could stumble upon a solution to the Rubik’s Cube, so what we did is we started from the goal — all sides having the same color — and worked backwards.”
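The idea of working backwards from the goal can be illustrated with value iteration on a toy puzzle. The sketch below is a minimal illustration, not DeepCubeA’s code: it assumes a hypothetical four-tile puzzle whose only move swaps two adjacent tiles, and it replaces the deep neural network with a lookup table (`cost_to_go`). The names `neighbours` and `value_iteration` are illustrative. Each sweep applies the update “one move plus the cheapest successor estimate”, so exact distances spread outward from the solved state by one move per sweep. The DeepCubeA paper trains a neural network with an update of this form, under the name deep approximate value iteration.

```python
from itertools import permutations


def neighbours(state):
    """Yield every state reachable by swapping two adjacent tiles (each move costs 1)."""
    for i in range(len(state) - 1):
        s = list(state)
        s[i], s[i + 1] = s[i + 1], s[i]
        yield tuple(s)


def value_iteration(n, sweeps=20):
    """Estimate moves-to-solve for every arrangement of n tiles (toy puzzle, assumed)."""
    goal = tuple(range(n))
    states = list(permutations(goal))
    # Lookup table standing in for DeepCubeA's neural network.
    cost_to_go = {s: 0.0 for s in states}
    for _ in range(sweeps):
        new = {}
        for s in states:
            if s == goal:
                new[s] = 0.0  # the solved state costs nothing by definition
            else:
                # Bellman update: one move plus the cheapest successor estimate.
                new[s] = min(1.0 + cost_to_go[t] for t in neighbours(s))
        cost_to_go = new
    return cost_to_go


J = value_iteration(4)
print(J[(3, 2, 1, 0)])  # 6.0: the fully reversed row is six swaps from solved
```

Because the table covers every state, it converges to exact distances here; a neural network is needed for the Rubik’s cube precisely because its state space is far too large to tabulate.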

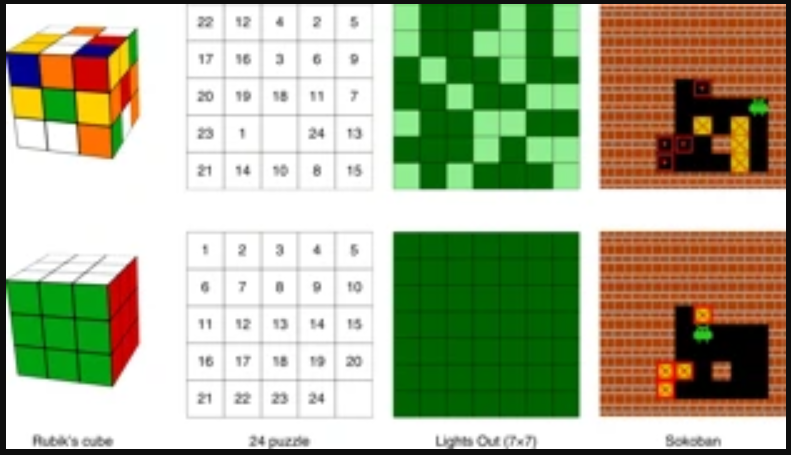

According to the researchers, the algorithm was fed around 10 billion Rubik’s cube configurations, with the goal of solving each within 30 moves. Beyond the Rubik’s cube, the algorithm can also solve other combinatorial puzzles such as Lights Out and Sokoban.

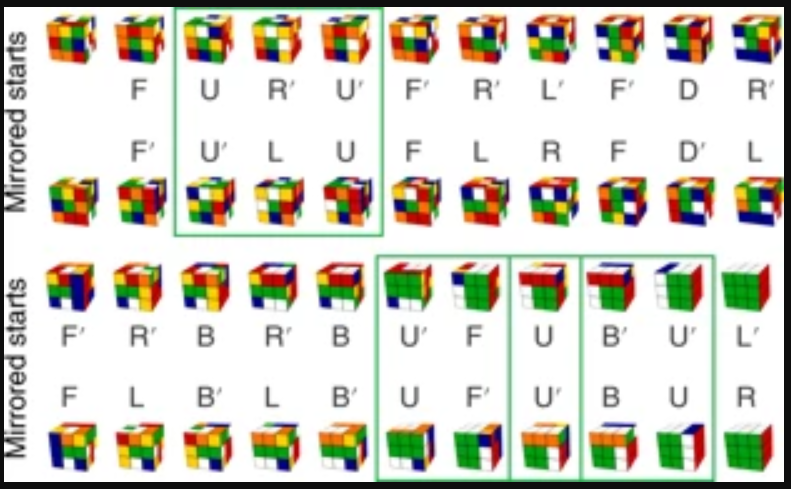

In the first stage of training, DeepCubeA was given relatively easy cubes, i.e. cubes that had been scrambled only a few times. Once the algorithm had learned to solve these easy puzzles, it moved on to harder instances. By training on many easy as well as hard instances, the algorithm taught itself to solve a Rubik’s cube regardless of difficulty.
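The easy-to-hard progression described above can be sketched as a generator of training states. This is a minimal sketch under stated assumptions: it uses a hypothetical toy puzzle (a row of tiles whose only move swaps two neighbours) instead of the cube, and the function names `scramble` and `training_batch` are illustrative. Each state is produced by starting from the solved state and scrambling it a random number of times, so small scramble counts yield easy states and large counts yield hard ones.

```python
import random


def scramble(n, num_moves, rng):
    """Start from the solved row 0..n-1 and apply num_moves random adjacent swaps."""
    state = list(range(n))
    for _ in range(num_moves):
        i = rng.randrange(n - 1)
        state[i], state[i + 1] = state[i + 1], state[i]
    return tuple(state)


def training_batch(batch_size, max_scrambles, n=4, seed=None):
    """Return (state, scramble_count) pairs, counts drawn uniformly from 1..max_scrambles.

    Random swaps can undo each other, so scramble_count is only an upper bound
    on how far a state really is from the solved state.
    """
    rng = random.Random(seed)
    batch = []
    for _ in range(batch_size):
        k = rng.randint(1, max_scrambles)
        batch.append((scramble(n, k, rng), k))
    return batch
```

Calling `training_batch` with `max_scrambles=1`, then 5, then 30 mimics the progression from nearly solved states to heavily scrambled ones.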

DeepCubeA usually solves a cube in about 20 moves and, most of the time, finds the shortest possible path. It solved 100% of the test configurations, finding a shortest path in most cases. Using the same shortest-path search, the algorithm also solved the 15 puzzle, 24 puzzle, 35 puzzle, 48 puzzle, Lights Out and Sokoban.
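Finding short solutions is a search problem. The DeepCubeA paper pairs its learned cost-to-go function with a weighted variant of A* search; the sketch below shows plain weighted A* on a toy puzzle and is an illustration under assumptions, not the published implementation. The puzzle (a row of tiles whose only move swaps two neighbours) is hypothetical, `misplaced_heuristic` is a hand-written stand-in for the neural network, and `weight` scales the path cost g in the priority `weight * g + h`. With weight 1 and this admissible heuristic, A* returns a shortest path; lowering the weight makes the search greedier, trading guaranteed optimality for fewer expansions.

```python
import heapq


def neighbours(state):
    """Yield every state reachable by swapping two adjacent tiles (each move costs 1)."""
    for i in range(len(state) - 1):
        s = list(state)
        s[i], s[i + 1] = s[i + 1], s[i]
        yield tuple(s)


def misplaced_heuristic(state):
    """Admissible stand-in for a learned cost-to-go: one swap fixes at most two tiles."""
    return sum(1 for i, x in enumerate(state) if i != x) / 2


def weighted_a_star(start, heuristic, weight=1.0):
    """Return the list of states from start to the solved state, ranked by weight*g + h."""
    goal = tuple(sorted(start))
    best_g = {start: 0}
    parent = {start: None}
    frontier = [(heuristic(start), 0, start)]
    while frontier:
        _, g, state = heapq.heappop(frontier)
        if g > best_g[state]:
            continue  # stale entry: a cheaper path to this state was found later
        if state == goal:
            path = []
            while state is not None:  # walk parent pointers back to the start
                path.append(state)
                state = parent[state]
            return path[::-1]
        for nxt in neighbours(state):
            new_g = g + 1
            if new_g < best_g.get(nxt, float("inf")):
                best_g[nxt] = new_g
                parent[nxt] = state
                heapq.heappush(frontier, (weight * new_g + heuristic(nxt), new_g, nxt))
    return None


path = weighted_a_star((2, 0, 3, 1), misplaced_heuristic)
print(len(path) - 1)  # 3 moves, the minimum for this arrangement
```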

Previously, researchers from Stanford University developed a similar deep reinforcement learning approach for solving 2×2×2 Rubik’s cubes. That work was based mainly on developing and augmenting the Monte Carlo Tree Search (MCTS) algorithm. The researchers applied autodidactic iteration, a reinforcement learning algorithm that learns to solve a Rubik’s cube without any human guidance.
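Autodidactic iteration builds its own training labels from the network’s current estimates. The sketch below shows that labelling step for a single state, as a minimal illustration under assumptions: it uses a hypothetical toy puzzle (a row of tiles whose only move swaps two neighbours), the names `adi_targets` and `untrained_value` are illustrative, and it assumes the reward convention of the original autodidactic iteration formulation (+1 for a move that reaches the solved state, -1 otherwise). In the real method, the value function is the neural network being trained, and the resulting targets train its value and policy outputs, which in turn guide MCTS.

```python
def neighbours(state):
    """Yield (move_index, next_state) for every swap of two adjacent tiles."""
    for i in range(len(state) - 1):
        s = list(state)
        s[i], s[i + 1] = s[i + 1], s[i]
        yield i, tuple(s)


def adi_targets(state, value_fn):
    """Return (value_target, policy_target) for one state, autodidactic-iteration style.

    Every child is scored as reward + value_fn(child); the best score becomes the
    value label and the move that achieves it becomes the policy label.
    """
    goal = tuple(sorted(state))
    scores = []
    for move, child in neighbours(state):
        reward = 1.0 if child == goal else -1.0  # assumed reward convention
        scores.append((reward + value_fn(child), move))
    # key= compares scores only, so ties keep the first (lowest-index) move
    return max(scores, key=lambda pair: pair[0])


def untrained_value(state):
    """Placeholder for an untrained value network: predicts 0 everywhere."""
    return 0.0


print(adi_targets((1, 0, 2, 3), untrained_value))  # (1.0, 0): swapping the first pair solves it
```

With an untrained value function, only states one move from the goal receive a positive label; as training repeats, useful value estimates spread outward from the solved state.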