The advent of large datasets and compute resources made convolutional neural networks (CNNs) the backbone of many computer vision applications. The field of deep learning has in turn largely shifted toward designing CNN architectures that improve performance on image recognition.

Convolutions, however, scale poorly with respect to large receptive fields, which makes capturing long-range interactions challenging.
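One way to see the scaling issue is to count weights. The sketch below is illustrative arithmetic, not taken from the paper's code: it assumes a plain k × k convolution without bias, and an attention layer whose only learned weights are three 1 × 1 projections (query, key, value), ignoring any positional terms. The function names and the channel width of 64 are hypothetical.

```python
def conv_params(kernel_size: int, c_in: int, c_out: int) -> int:
    """Weights in a k x k convolution (bias ignored): grows with k squared."""
    return kernel_size * kernel_size * c_in * c_out


def attention_params(c_in: int, c_out: int) -> int:
    """Weights in three 1 x 1 projections (query, key, value).

    Assumption: positional terms are ignored, so the count does not
    depend on how large a neighbourhood the layer attends over.
    """
    return 3 * c_in * c_out


for k in (3, 7, 11):
    print(k, conv_params(k, 64, 64), attention_params(64, 64))
# 3 36864 12288
# 7 200704 12288
# 11 495616 12288
```

Under these assumptions, widening the receptive field from 3 × 3 to 11 × 11 multiplies the convolution's weight count by roughly 13, while the attention projections stay the same size.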

The problem of long-range interactions has been tackled in sequence modelling through the use of attention. Recently, attention modules have also been employed in discriminative computer vision models to boost the performance of traditional CNNs.

Overview Of Self-Attention

Attention was introduced for encoder-decoder models to allow content-based summarization of information from a variable-length source sentence. Self-attention, by contrast, is defined as “attention applied to a single context instead of across multiple contexts”.
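The distinction can be made concrete with a minimal NumPy sketch. It assumes scaled dot-product attention with a single head, which is a common formulation rather than something this article specifies; the function name, weight names, and shapes are all hypothetical. The same function gives encoder-decoder style attention when queries and context differ, and self-attention when they are the same array.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def attention(query_source, context, w_q, w_k, w_v):
    """Each row of query_source summarises `context` by content similarity."""
    q = query_source @ w_q                 # (n_q, d)
    k = context @ w_k                      # (n_c, d)
    v = context @ w_v                      # (n_c, d)
    weights = softmax(q @ k.T / np.sqrt(q.shape[-1]))  # (n_q, n_c), rows sum to 1
    return weights @ v                     # (n_q, d)


rng = np.random.default_rng(0)
d_in, d = 8, 4
w_q, w_k, w_v = (rng.normal(size=(d_in, d)) for _ in range(3))
source = rng.normal(size=(5, d_in))  # e.g. states for a 5-token source sentence
target = rng.normal(size=(3, d_in))  # e.g. states for 3 decoder steps

cross_out = attention(target, source, w_q, w_k, w_v)  # across two contexts
self_out = attention(source, source, w_q, w_k, w_v)   # a single context
```

Here `cross_out` has one row per target step and `self_out` has one row per source position.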

The idea is to make machines learn the important features by looking at a few places in an image. This can be compared to how humans, despite having seen only part of an object, can still identify it from different angles: for example, recognising a person from the back even though they were barely observed from that side.

Self-attention has been shown to be an instantiation of non-local means and has been used to improve video classification and object detection. Using attention as a primary mechanism for representation learning has seen widespread adoption in deep learning since the Transformer architecture, which entirely replaced recurrence with self-attention.

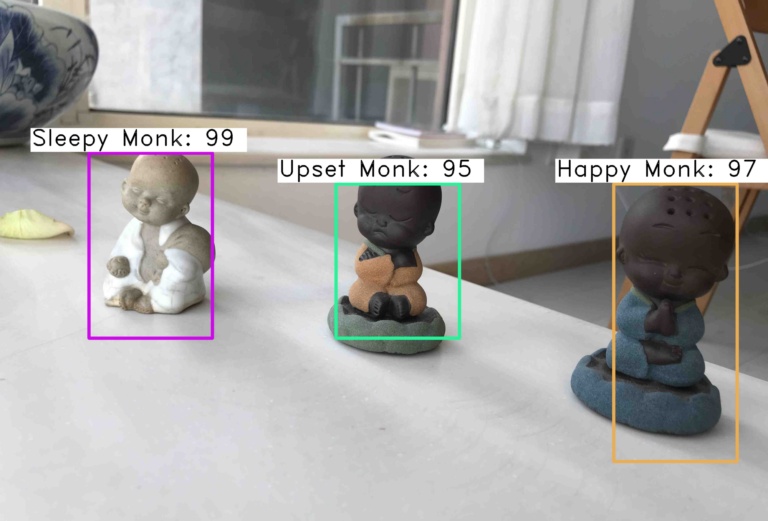

Selective attention mechanisms try to ignore irrelevant objects in a scene, and spatially-aware attention mechanisms have been used to augment CNN architectures with contextual information that improves object detection.

But can attention models serve as the primary primitive of vision models instead of acting as an augmentation to convolution?

Going Beyond Convolutions

In the paper titled Stand-Alone Self-Attention in Vision Models, the authors explore attention as more than an augmentation to CNNs. They describe a stand-alone self-attention layer that can be used to replace spatial convolutions and build a fully attentional model.
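To make the idea of replacing a spatial convolution concrete, here is a minimal single-head sketch of self-attention over a k × k neighbourhood around each pixel. It is a simplified illustration, not the authors' implementation: the function name, the zero padding at the borders, the explicit Python loops, and the single `(k, k, d)` relative position table `rel_emb` are all assumptions made for readability.

```python
import numpy as np


def local_self_attention(x, w_q, w_k, w_v, rel_emb):
    """x: (H, W, c_in); w_q, w_k, w_v: (c_in, d); rel_emb: (k, k, d), k odd.

    Returns an (H, W, d) feature map, the same spatial size as the input.
    """
    k = rel_emb.shape[0]
    pad = k // 2
    h, w, _ = x.shape
    q = x @ w_q                                        # one query per pixel
    xp = np.pad(x, ((pad, pad), (pad, pad), (0, 0)))   # zero padding (assumption)
    keys, values = xp @ w_k, xp @ w_v
    out = np.empty((h, w, w_q.shape[1]))
    for i in range(h):
        for j in range(w):
            k_win = keys[i:i + k, j:j + k]             # (k, k, d) window centred on (i, j)
            v_win = values[i:i + k, j:j + k]
            # Content term q.k plus position term q.r for each neighbour.
            logits = (k_win + rel_emb) @ q[i, j]       # (k, k)
            weights = np.exp(logits - logits.max())
            weights /= weights.sum()                   # softmax over the window
            out[i, j] = np.tensordot(weights, v_win, axes=([0, 1], [0, 1]))
    return out


rng = np.random.default_rng(0)
c_in, d, k = 3, 4, 3
w_q, w_k, w_v = (rng.normal(size=(c_in, d)) for _ in range(3))
rel_emb = rng.normal(size=(k, k, d))
out = local_self_attention(rng.normal(size=(6, 6, c_in)), w_q, w_k, w_v, rel_emb)
```

Like a convolution, the layer maps each pixel's neighbourhood to one output vector, but the mixing weights are computed from the content of the neighbourhood rather than stored as a fixed kernel.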

The initial layers of a CNN, sometimes referred to as the stem, play a critical role in learning local features such as edges, which the later layers use to identify global objects.

Because input images are large, the stem typically differs from the core block and focuses only on lightweight operations with spatial downsampling.

At the stem layer, the content consists of RGB pixels that are individually uninformative and heavily spatially correlated. This property makes learning useful features such as edge detectors difficult for content-based mechanisms such as self-attention.

To bridge the gap between convolutions and self-attention while not significantly increasing computation, distance-based information is introduced in the pointwise 1 × 1 convolution (W_V) through spatially-varying linear transformations.
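One way to read "spatially-varying linear transformations" is that each position in the neighbourhood gets its own value matrix, built as a position-dependent mixture of a small bank of matrices. The sketch below follows that reading under stated assumptions: the bank `w_v_bank`, the row and column embeddings, the mixing vectors `nu`, and the function name are all hypothetical, and the details may differ from the paper.

```python
import numpy as np


def mixed_value_projection(window, w_v_bank, emb_row, emb_col, nu):
    """window: (k, k, c_in); w_v_bank: (m, c_in, d);
    emb_row, emb_col: (k, e); nu: (m, e). Returns values of shape (k, k, d).
    """
    pos = emb_row[:, None, :] + emb_col[None, :, :]   # (k, k, e) position code
    logits = pos @ nu.T                               # (k, k, m)
    logits = logits - logits.max(axis=-1, keepdims=True)
    p = np.exp(logits)
    p /= p.sum(axis=-1, keepdims=True)                # softmax over the m matrices
    # Position (a, b) uses the matrix sum_m p[a, b, m] * w_v_bank[m].
    return np.einsum("abm,mcd,abc->abd", p, w_v_bank, window)


rng = np.random.default_rng(0)
k, c_in, d, m, e = 3, 3, 4, 2, 5
values = mixed_value_projection(
    rng.normal(size=(k, k, c_in)),
    rng.normal(size=(m, c_in, d)),
    rng.normal(size=(k, e)),
    rng.normal(size=(k, e)),
    rng.normal(size=(m, e)),
)
```

Because the mixture weights depend only on position, the value transform can behave differently at different offsets, much as a convolution kernel does, while the cost stays close to that of a single 1 × 1 projection.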

To validate their approach, the researchers conducted experiments on the ImageNet classification task, whose dataset contains 1.28 million training images and 50,000 test images.

For width scaling, the base width is linearly multiplied by a given factor across all layers. For depth scaling, a given number of layers are removed from each layer group.
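The two scaling rules can be written down in a few lines. This is a sketch with hypothetical helper names; the base widths and layer counts per group are assumed ResNet-50-style values chosen for illustration, not figures stated in this article.

```python
def scale_width(base_widths, factor):
    """Multiply every layer group's width by the same factor."""
    return [int(round(w * factor)) for w in base_widths]


def scale_depth(layers_per_group, removed):
    """Remove the same number of layers from each layer group."""
    scaled = [n - removed for n in layers_per_group]
    if min(scaled) < 1:
        raise ValueError("each layer group must keep at least one layer")
    return scaled


base_widths = [64, 128, 256, 512]   # assumed, ResNet-50-style
layers_per_group = [3, 4, 6, 3]     # assumed, ResNet-50-style

print(scale_width(base_widths, 0.5))     # [32, 64, 128, 256]
print(scale_depth(layers_per_group, 1))  # [2, 3, 5, 2]
```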

The results show that the attention models outperform the convolutional baseline across all depths while having 12% fewer FLOPS and 29% fewer parameters.

For object detection, the convolution stem performs better when the detection heads and feature pyramid network (FPN) are also convolutional, but performs similarly when the entire rest of the network is fully attentional. These results suggest that convolutions consistently perform well when used in the stem.

When attention is used in the early groups and convolutions are used in the later groups, the performance degrades despite a large increase in the parameter count. This suggests that convolutions may better capture low-level features while stand-alone attention layers may better integrate global information.

In this work, the authors found that:

- Self-attention can indeed be an effective stand-alone layer, as shown by developing and testing a pure self-attention vision model

- Self-attention is especially impactful when used in later layers

- Stand-alone self-attention is an important addition to the vision practitioner’s toolbox

More details on this model are available in the paper, Stand-Alone Self-Attention in Vision Models.