It isn’t enough for virtual assistants to offer a rote response to your voice or text. For AI-based systems to become truly useful in our daily lives, they’ll need to achieve what’s currently impossible: the complete comprehension of human language.

With this goal in mind, researchers Douwe Kiela, Jason Weston, Harm de Vries, Kurt Shuster, Dhruv Batra and Devi Parikh at Facebook’s AI research lab (FAIR) are teaching artificial intelligence systems to understand language by getting them to ‘guide’ virtual tourists around New York City. They have developed a new research task, called Talk The Walk, which explores this embodied AI approach while introducing a degree of realism not previously found in this area.

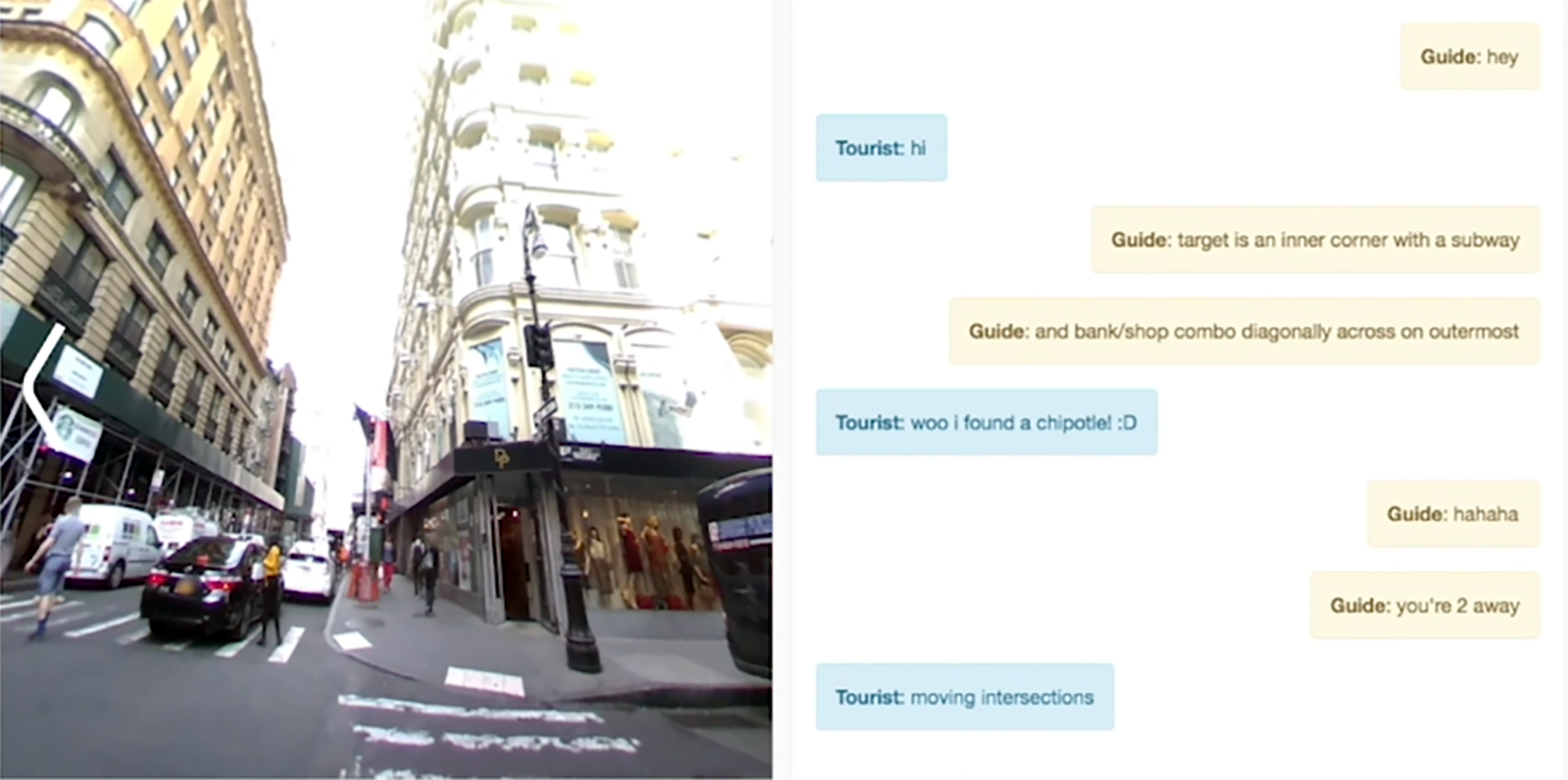

According to a paper published by FAIR, the process involves placing a “tourist bot” on a random street corner in New York and getting a “guide bot” to direct it to a spot on a 2D map.

The process goes something like this:

- A pair of AI agents has to communicate with each other to accomplish the shared goal of navigating to a specific location.

- The goal of the task is for the tourist bot to navigate its way through 360-degree images of actual New York City neighbourhoods.

- This is done with the help of the guide bot, which sees nothing but a map of the neighbourhood.

- Using a novel attention mechanism called MASC (Masked Attention for Spatial Convolutions), the researchers helped the guide bot focus on the right place on the map.

- This, in turn, produced results that were more than twice as accurate on the test set.
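
To make the idea of a guide bot "focusing on the right place on the map" more concrete, here is a minimal Python sketch of masked attention over a small grid. It is only an illustration under stated assumptions, not FAIR's implementation: the 4x4 grid, the hand-picked scores, and the helper names (`mask_from_scores`, `masked_shift`, `argmax_cell`) are all hypothetical. In the actual system the mask would be predicted by a learned model from the tourist's messages and applied to learned map embeddings; here it is applied to a plain probability grid that tracks where the guide believes the tourist is standing.

```python
import math


def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def mask_from_scores(scores):
    """Turn 9 scores (assumed to come from the tourist's message)
    into a 3x3 attention mask over the neighbouring grid cells."""
    w = softmax(scores)
    return [w[0:3], w[3:6], w[6:9]]


def masked_shift(belief, mask):
    """Spread each cell's belief to its neighbours, weighted by the mask.
    mask[dr + 1][dc + 1] is the weight for moving by (dr, dc).
    Belief that would leave the grid is dropped (zero padding)."""
    rows, cols = len(belief), len(belief[0])
    out = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    nr, nc = r + dr, c + dc
                    if 0 <= nr < rows and 0 <= nc < cols:
                        out[nr][nc] += belief[r][c] * mask[dr + 1][dc + 1]
    return out


def argmax_cell(grid):
    """Return the (row, col) of the most likely cell."""
    cells = [(r, c) for r in range(len(grid)) for c in range(len(grid[0]))]
    return max(cells, key=lambda rc: grid[rc[0]][rc[1]])


# Hypothetical 4x4 neighbourhood: the guide is sure the tourist is at (1, 1).
belief = [[0.0] * 4 for _ in range(4)]
belief[1][1] = 1.0

# Hand-picked scores standing in for "the tourist says it moved one block right".
# Index 5 is the centre-right cell of the 3x3 mask, i.e. a move of (0, +1).
scores = [0.0] * 9
scores[5] = 10.0

shifted = masked_shift(belief, mask_from_scores(scores))
print(argmax_cell(shifted))  # prints (1, 2)
```

The mask acts like a small convolution kernel with most of its weight on a single neighbour, so applying it moves the guide's belief one cell in the reported direction while leaving a little probability on the alternatives.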

However, Kiela and Weston added that Talk The Walk isn’t meant to be a competition between natural language and synthetic interactions. Rather, it is an attempt to offer clarity and quantifiable results related to the ultimate goal of creating machines that can effectively “talk” to humans as well as to each other.