Apple presented its vision of the future today in Cupertino, California. The company unveiled three new smartphones: the iPhone 11, the iPhone 11 Pro and the iPhone 11 Pro Max.

As expected, Apple put more emphasis on how it is making machine learning work for its products, while tuning its hardware to offer what it calls the world’s best machine learning platform on mobile devices. From image processing for high-quality photos to new chips for better performance, this latest event brought a few, but quite significant, pieces of news for the machine learning community.

The speakers even went ahead and announced that Apple has the best machine learning platform in any smartphone.

Let’s take a look at a few interesting machine learning announcements from the Steve Jobs Theater:

High Resolution With Deep Fusion

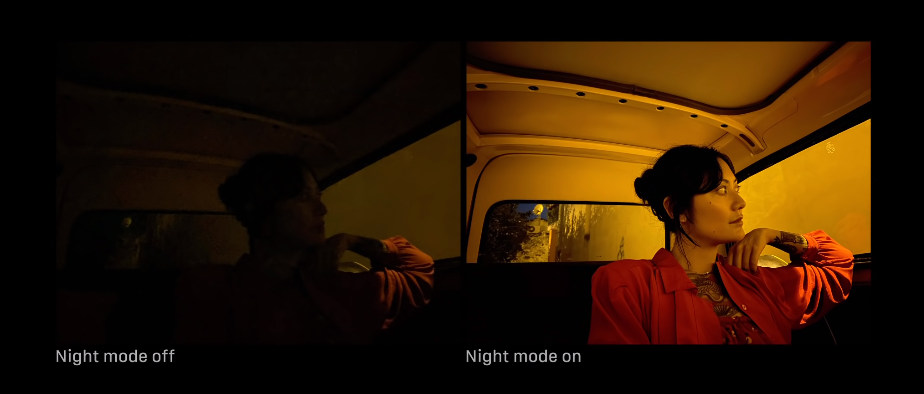

The new iPhone 11 Pro features a triple-camera setup on the back, which includes a telephoto lens, a wide-angle lens, and an ultra-wide-angle lens. The new ultra-wide lens is shown in the Camera app as a 0.5x button. It has an f/2.4 aperture with a 120-degree field of view.

Apple says it is using machine learning in the iPhone 11’s cameras to help process their images, and says the chip’s speed allows it to shoot 4K video at 60 fps with HDR enabled.

For still photos, Apple also previewed a technology called ‘Deep Fusion’, which it says merges multiple exposures of a scene and runs a neural network in the background to process the result pixel by pixel, maintaining consistency without sacrificing quality.
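Apple has not published how Deep Fusion works internally, so the following is only a toy illustration of what combining several images pixel by pixel can mean. It swaps in a classic, non-neural technique (exposure fusion with a "well-exposedness" weight) in place of Apple's neural network. The function names, the grayscale 0-to-1 pixel format and the `sigma` value are all assumptions made for this sketch.

```python
import math


def well_exposedness(value, sigma=0.2):
    """Weight a pixel by how close it is to mid-gray (0.5).

    This is the classic exposure-fusion heuristic, NOT Apple's method:
    pixels that are nearly black or nearly white get a low weight.
    """
    return math.exp(-((value - 0.5) ** 2) / (2 * sigma ** 2))


def fuse_exposures(frames, sigma=0.2):
    """Fuse same-sized grayscale frames (lists of rows, values in 0..1).

    Each output pixel is a weighted average of the corresponding pixels
    in all frames, using the well-exposedness weight above.
    """
    height, width = len(frames[0]), len(frames[0][0])
    fused = []
    for y in range(height):
        row = []
        for x in range(width):
            values = [frame[y][x] for frame in frames]
            weights = [well_exposedness(v, sigma) for v in values]
            total = sum(weights)
            row.append(sum(w * v for w, v in zip(weights, values)) / total)
        fused.append(row)
    return fused


if __name__ == "__main__":
    # Hypothetical 1x2 "photos": an underexposed and an overexposed frame.
    dark = [[0.05, 0.45]]
    bright = [[0.55, 0.98]]
    print(fuse_exposures([dark, bright]))
```

In this sketch each output pixel leans towards whichever frame captured it with the more usable exposure, which is the general intuition behind merging multiple shots into one image.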

QuickTake is a new video recording feature that makes it easier to take videos by long-pressing the camera shutter button. Video can be recorded in 4K at 60 fps, with slo-mo, time-lapse, and extended dynamic range also available.

The front-facing iPhone 11 camera has been updated to 12 MP, with wide-angle selfie support in landscape orientation. You can also take 4K video at 60 fps, as well as slo-mo videos.

If a device allows one to shoot two 4K videos at a time while giving 5 hours of extra battery life, that’s a significant upgrade.

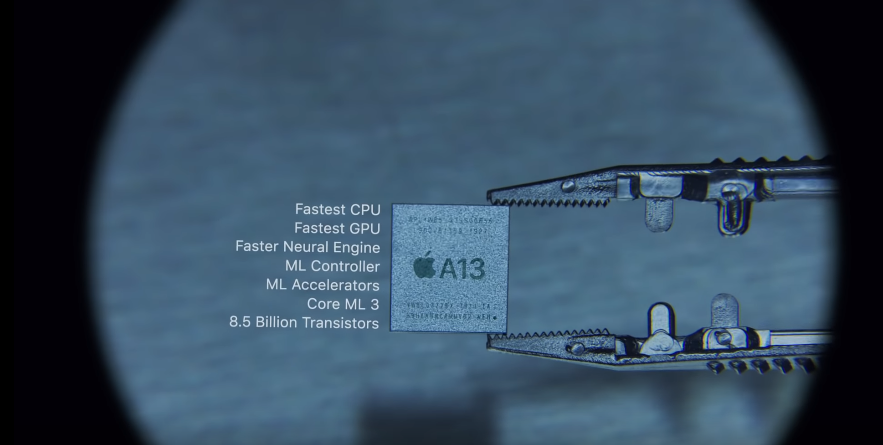

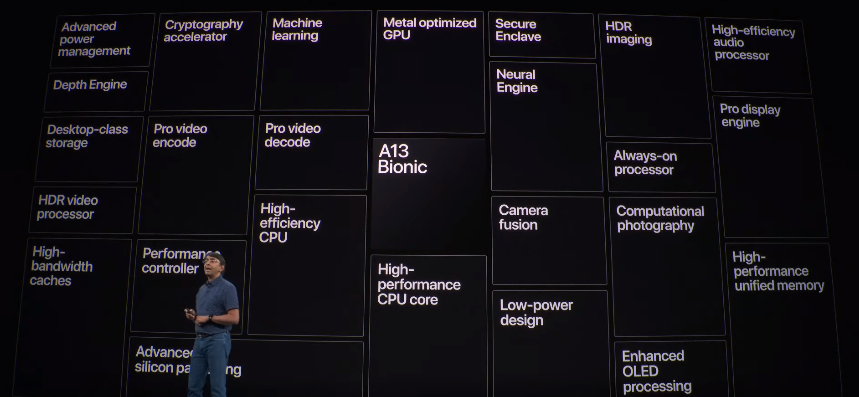

High-Performance A13 Bionic Chip

The iPhone 11 is powered by Apple’s new A13 Bionic chip, which Apple touts as its fastest processor ever. As for battery life, Apple says the iPhone 11 lasts one hour longer than the iPhone XR.

“We use machine learning throughout the iPhone,” said Sri Santhanam, explaining how the machine learning and low-power design of the A13 Bionic make it the fastest chip ever in a smartphone.

The CPU, GPU and Neural Engine are each optimised for different machine learning workloads, and the A13 Bionic makes ML operations even faster.

Here’s what A13 has to offer for the ML community:

- It carries out matrix multiplication, one of the most common machine learning tasks, 6 times faster.

- Overall, the CPU alone has a capacity of 1 trillion operations per second.

- It comes with a Machine Learning Controller, which allows ML models to be scheduled across the CPU, GPU and the Neural Engine.

- It can be used for on-device natural language processing, image classification in photos and videos, character animation in AR apps, and a ton more.

The 6 times faster matrix multiplication is due to machine learning accelerators built for exactly that task. Multiplication is a big deal in deep learning, because what goes on behind any neural network operation can be boiled down to a large number of additions and multiplications. According to Apple, this translates into 1 trillion operations per second.
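To make the point about additions and multiplications concrete, here is a minimal sketch in plain Python that multiplies two matrices the naive way and counts every multiply-add it performs. The helper names are made up for this illustration, and the timing estimate assumes, purely for the sake of arithmetic, that one multiply-add counts as one "operation" and that the quoted 1 trillion operations per second is sustained; real hardware will not behave this ideally.

```python
def matmul_with_op_count(a, b):
    """Multiply matrices a (n x k) and b (k x m), counting multiply-adds."""
    inner = len(b)
    if any(len(row) != inner for row in a):
        raise ValueError("inner dimensions of a and b do not match")
    rows, cols = len(a), len(b[0])
    result = [[0.0] * cols for _ in range(rows)]
    multiply_adds = 0
    for i in range(rows):
        for j in range(cols):
            acc = 0.0
            for k in range(inner):
                acc += a[i][k] * b[k][j]  # one multiplication, one addition
                multiply_adds += 1
            result[i][j] = acc
    return result, multiply_adds


def ideal_seconds(operations, ops_per_second=1e12):
    """Idealised run time, assuming the peak rate is fully sustained."""
    return operations / ops_per_second


if __name__ == "__main__":
    product, ops = matmul_with_op_count([[1, 2], [3, 4]], [[5, 6], [7, 8]])
    print(product, ops)
    # A 1024 x 1024 by 1024 x 1024 product needs 1024**3 multiply-adds.
    print(ideal_seconds(1024 ** 3))
```

A single fully connected layer of a neural network is essentially one such matrix product, which is why hardware that speeds up this inner loop speeds up the whole model.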

The Machine Learning Controller, in turn, automatically schedules models across the CPU, GPU and Neural Engine, so the work is distributed seamlessly.
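Apple did not describe how the controller decides where work should run. As a purely hypothetical illustration of the idea of dispatching different kinds of work to different compute units, the sketch below uses a hand-written preference table; the operation names, unit names and preferences are all invented for this example and do not reflect Apple's actual logic.

```python
# Hypothetical preference table: for each kind of work, the compute units
# it would ideally run on, best first. Invented for illustration only.
UNIT_PREFERENCES = {
    "convolution": ("neural_engine", "gpu", "cpu"),
    "matrix_multiply": ("neural_engine", "cpu", "gpu"),
    "image_resize": ("gpu", "cpu"),
    "custom_layer": ("cpu",),
}


def schedule(operations, available_units):
    """Assign each operation to the first preferred unit that is available.

    Unknown operations fall back to the CPU. Returns (operation, unit) pairs.
    """
    plan = []
    for op in operations:
        preferences = UNIT_PREFERENCES.get(op, ("cpu",))
        unit = next((u for u in preferences if u in available_units), None)
        if unit is None:
            raise RuntimeError(f"no available unit can run {op!r}")
        plan.append((op, unit))
    return plan


if __name__ == "__main__":
    pipeline = ["image_resize", "convolution", "matrix_multiply", "custom_layer"]
    for op, unit in schedule(pipeline, {"cpu", "gpu", "neural_engine"}):
        print(f"{op} -> {unit}")
```

On the developer side, Core ML exposes a coarser control through the `computeUnits` property of `MLModelConfiguration`, which lets an app restrict a model to the CPU only, to the CPU and GPU, or to all available units.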

“The A13 Bionic is the fastest CPU ever in a smartphone,” Apple said onstage, adding that it also has “the fastest GPU in a smartphone.”

World’s Best ML Platform On Phones?

The A13 also features an Apple-designed 64-bit ARMv8.4-A six-core CPU, with two high-performance cores called Lightning running at 2.65 GHz and four energy-efficient cores called Thunder.

The two high-performance cores are 20% faster with a 30% reduction in power consumption, while the four high-efficiency cores are 20% faster with a 40% reduction in power consumption.
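As a quick back-of-the-envelope check, these two percentages can be combined into a single performance-per-watt figure. The sketch below assumes, which Apple did not state explicitly, that the speed-up and the power reduction apply at the same time to the same workload; the function name is made up for this example.

```python
def perf_per_watt_gain(speedup_pct, power_reduction_pct):
    """Relative performance-per-watt versus the previous generation.

    Assumes the speed-up and the power reduction hold simultaneously.
    """
    relative_performance = 1 + speedup_pct / 100
    relative_power = 1 - power_reduction_pct / 100
    return relative_performance / relative_power


if __name__ == "__main__":
    print(f"high-performance cores: {perf_per_watt_gain(20, 30):.2f}x")
    print(f"high-efficiency cores:  {perf_per_watt_gain(20, 40):.2f}x")
```

Under that assumption, the high-performance cores deliver roughly 1.7 times the work per unit of power (1.2 / 0.7) and the high-efficiency cores about 2 times (1.2 / 0.6).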

That efficiency boost comes despite Apple fitting a record 8.5 billion transistors inside and raising performance by roughly 20% across the board.

Earlier this year, at its WWDC event, Apple announced some fine machine learning updates and demonstrated how developers can benefit from the customisation.

Back then, Apple released Core ML 3 and other exciting tool upgrades for the machine learning community.

Core ML forms the foundation for domain-specific frameworks and functionality. Core ML supports Vision for image analysis and Natural Language for text processing. The Natural Language framework can be used with Create ML to train and deploy custom NLP models.

With Create ML, meanwhile, one can build models for object detection, activity and sound classification, and recommendations, and take advantage of word embeddings and transfer learning for text classification.

With over 100 model layers now supported with Core ML, the ML team at Apple believes that apps can now use state-of-the-art models to deliver experiences that deeply understand vision, natural language and speech like never before.

The availability of high-end frameworks like the ones discussed above, backed by the A13 chip, makes a strong argument for Apple’s claim to the throne. It is also a clear sign of Apple’s aim to build a vertical tech stack that caters to a larger community.

Apple has been very open about its interest in building a next-generation machine learning platform. The enhancement of its hardware, combined with state-of-the-art software options, has put Apple at the frontier of machine learning advancement.