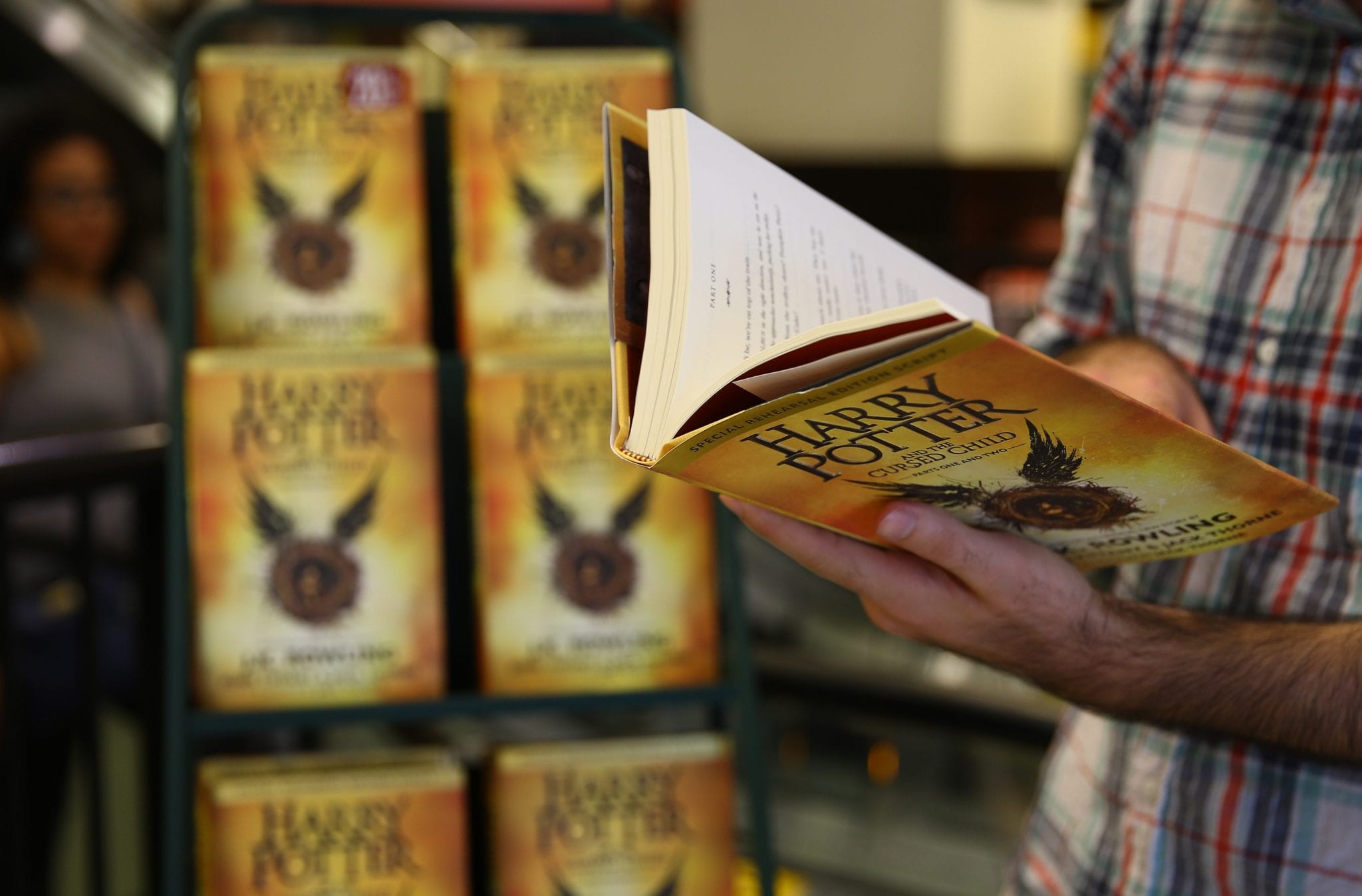

As Harry Potter turns 20, it is worth remembering that J.K. Rowling’s runaway success, published by Bloomsbury, gave a new lease of life to adult fan fiction. Two decades later, publishers are leveraging big data to uncover stories that could potentially be the next bestseller.

J.K. Rowling’s Harry Potter books caused worldwide Potter mania, with 450 million copies sold in 79 languages. The question is: can big data replicate the success of Rowling’s fantasy fiction?

That’s where computer algorithms and big data analytics step in. Today, several researchers and professionals are working on ways to help publishing houses predict a book’s chances of success before it’s released.

Can Big Data find a bestseller?

Jodie Archer, a former research lead on literature at Apple, and Matthew L. Jockers, an associate professor of English at the University of Nebraska-Lincoln, are co-publishing a scholarly book titled “The Bestseller Code: Anatomy of the Blockbuster Novel.” The book revolves around the “bestseller-ometer,” an algorithm that claims a track record of “predicting” New York Times bestsellers when applied retrospectively to novels from the past 30 years.

Around 2008, Jockers worked as a lecturer at Stanford University’s Palo Alto campus. His work revolved around applying computer-enabled quantitative analysis to text. Archer, on the other hand, doubted that computers could say anything substantive about literature.

Together they demonstrated a computer model’s prowess in picking the genre of Shakespeare’s plays based on textual markers.

Archer and Jockers realized that narrative “cohesion” was a common trait among top-selling authors. They found this by cataloging words associated with certain subjects. For example, Danielle Steel devotes one-third of her novels to a signature topic, “domestic life,” while John Grisham writes mostly about “lawyers and law.”
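To make the idea of cataloging subject words concrete, here is a minimal sketch in Python. It is not the bestseller-ometer itself, whose internals the article does not describe. The word lists in `TOPIC_WORDS` and the function name `topic_shares` are hypothetical, chosen only for illustration, and the approach is a simple keyword count rather than whatever model Archer and Jockers actually used.

```python
import re
from collections import Counter

# Hypothetical word lists: the real catalog of subject words is not published
# in the article, so these are illustrative assumptions only.
TOPIC_WORDS = {
    "domestic life": {"home", "kitchen", "family", "dinner", "children"},
    "lawyers and law": {"lawyer", "court", "judge", "trial", "jury"},
}


def topic_shares(text):
    """Return each topic's share of all topic-word hits found in `text`."""
    tokens = re.findall(r"[a-z']+", text.lower())
    hits = Counter()
    for token in tokens:
        for topic, words in TOPIC_WORDS.items():
            if token in words:
                hits[topic] += 1
    total = sum(hits.values())
    if total == 0:
        # No catalogued words found: report zero for every topic.
        return {topic: 0.0 for topic in TOPIC_WORDS}
    return {topic: hits[topic] / total for topic in TOPIC_WORDS}


sample = "The judge left the court and drove home to his family for dinner."
shares = topic_shares(sample)
for topic, share in shares.items():
    print(f"{topic}: {share:.0%}")
```

Under this toy measure, an author whose counts concentrate in one or two topics across a whole novel would show the kind of “cohesion” described above, while an author whose counts are spread thinly over many topics would not.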

Archer and Jockers made other interesting observations as well. For instance, sex doesn’t sell, or at least it’s confined to a vanishingly small proportion of best-selling material. They ran their model on the book Fifty Shades of Grey and concluded that its chief subject was “human closeness.”

Other algorithm-based initiatives in the publishing arena

Archer and Jockers are not the only ones applying the power of big data to literature. Berlin-based startup Inkitt is venturing into a similar domain with what has been billed as the “first novel selected by an algorithm.” Essentially, the program tracks reader responses to stories posted on its web platform to identify potential bestsellers.

Another firm, London’s Jellybooks, founded in 2011, employs a similar approach. Its algorithm measures “reader engagement” later in the literary production cycle, right before the books are published. This is achieved using software that readers download onto their devices in exchange for advance access to a title.

The algorithms aren’t magic. Matthew Wilkens, assistant professor of English at the University of Notre Dame, comments, “They reflect interpretative and analytical choices reading one book closely; you’re looking for certain repetitions, word usage patterns, thematic emphases, and allusions.”

Use of algorithms in the publishing industry

These algorithms furnish instructive data points that highlight the diverse directions in which popular fiction may be taken. The potential applications of such tools at publishing’s “discovery” stage are endless. They not only help identify bestsellers but could also help create them.

The bestseller-ometer singled out Dave Eggers’ The Circle as the exemplary bestseller of the past 30 years, giving the book a 100 percent chance of becoming one. The prediction held up: the novel had sold 220,000 copies as of June.

The tool combines the processing power of thousands of computers to identify the characteristics of best-selling fiction at scale by interrogating a massive body of literature.

Last Words

Archer and Jockers have no intention at the moment of “disrupting” publishing with their tool. In other words, they have no immediate plans to commercialize the algorithm. Instead, it will serve as a prototype for the approach’s potential in tackling literary questions. Even so, the algorithm could sharpen publishers’ ability to identify prospective bestsellers at the manuscript stage.

However, Knopf editor Carole Baron is pretty skeptical of the forecasting power of an algorithm, as it is based on already-published works.

Moreover, publishers are rarely inclined to divert funds to unknown writers. This is where the bestseller-ometer could come in really handy, as a tool that eases publishers’ concerns about taking a flier on a rookie author. It wouldn’t come as much of a surprise if the tool helped authors create the next Harry Potter-like success.