Adobe is on a mission to make Sensei a smart assistant by adding voice recognition to its applications and deep learning to a slew of tools. That was abundantly clear at the recently concluded Adobe MAX 2017 conference in Las Vegas, the largest MAX to date. AI and machine learning were woven into most of the apps, whether for photo, video or illustration editing, and buzzwords such as deep learning and neural networks dominated the presentations. Over the last two years, Adobe has significantly ramped up Adobe Sensei, the AI engine that powers most of its new features.

Adobe CEO Shantanu Narayen believes artificial intelligence will amplify human creativity rather than substitute for it. “We think artificial intelligence will evolve, to help you fill that need. Machines can see patterns and possibilities that we may not be able to see immediately, and our artificial intelligence technology, which is Adobe Sensei, is going to be different and will harness the collective intelligence of the entire creative community,” Narayen said. He announced the biggest release of Creative Cloud products since the service first launched five years ago.

Analytics India Magazine lists a few of Adobe’s AI tools that help people push their creative boundaries:

Project SceneStitch: One of the most striking tools on show, SceneStitch, powered by Adobe Sensei, fills large holes in images by querying a database of images and automatically finding content to fill the hole. Adobe engineer Brian Price, who demoed the project, showed how the designer highlights the part of a photo he or she wants to remove, as with the Content Aware Fill tool, and SceneStitch then fills the hole. Rather than relying only on the surrounding scene, the algorithm compares the image against an entire database of photos and replaces the selected section with content from a relevant photo. Still at the prototype stage, this is one of the most impressive image editing techniques shown and could potentially phase out the human in the loop. According to Price, the tool lets designers do more than just fill a hole in an image; it is a way of reimagining and remixing content.
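The database lookup described above can be illustrated with a toy sketch. This is not Adobe's algorithm, which was not detailed at the conference; it is a minimal nearest-match search under simplifying assumptions: images are small grayscale grids of the same size, and the hypothetical function `stitch` picks the database image whose visible pixels best match the photo, then copies its pixels into the hole.

```python
def stitch(image, hole, database):
    """Fill `hole` in `image` using the best-matching image in `database`.

    image    -- 2D list of grayscale values (illustrative stand-in for a photo)
    hole     -- set of (row, col) coordinates the user wants replaced
    database -- list of candidate images with the same dimensions as `image`
    """
    def mismatch(candidate):
        # Sum of squared differences over the pixels that are NOT in the hole.
        return sum(
            (image[y][x] - candidate[y][x]) ** 2
            for y in range(len(image))
            for x in range(len(image[0]))
            if (y, x) not in hole
        )

    # The candidate that agrees best with the visible part of the photo wins.
    best = min(database, key=mismatch)

    # Copy only the hole pixels from the winning candidate.
    filled = [row[:] for row in image]
    for y, x in hole:
        filled[y][x] = best[y][x]
    return filled


photo = [[1, 2, 3], [4, 0, 6], [7, 8, 9]]
candidates = [
    [[9, 9, 9], [9, 9, 9], [9, 9, 9]],
    [[1, 2, 3], [4, 5, 6], [7, 8, 9]],
]
result = stitch(photo, {(1, 1)}, candidates)
```

A production system would compare learned image features rather than raw pixels and would blend the borrowed content into the scene, but the retrieve-then-fill structure is the same idea.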

Project Cloak: Want to get rid of an unwanted object in your video? Adobe’s Geoff Oxholm showed the way by selecting the spot and letting the Content Aware Fill tool do the rest. It works brilliantly when the thing one wants to remove, whether an object or a shadow, is freestanding in front of the background, explained Oxholm. “Cloak enables removing unwanted things from a video by imagining what would appear if these unwanted things were removed,” Oxholm said during the conference. The modus operandi: the user first creates a mask that selects the object or area to remove from the video, and the Cloak software then automatically and intelligently replaces that area in each frame.
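The mask-then-fill workflow can be sketched in a few lines. Adobe did not describe Cloak's internals, so the following swaps in a much simpler, well-known technique: a per-pixel temporal median, which assumes a static camera and a moving object. The function name `fill_masked` and the grayscale list-of-lists frame format are illustrative assumptions.

```python
from statistics import median_low


def fill_masked(frames, masks):
    """Replace masked pixels in every frame with an estimated background.

    frames -- list of frames, each a 2D list of grayscale values
    masks  -- list of 2D lists of booleans, True where the user marked
              the unwanted object in that frame
    """
    height, width = len(frames[0]), len(frames[0][0])
    result = [[row[:] for row in frame] for frame in frames]

    for y in range(height):
        for x in range(width):
            # Values this pixel takes in frames where it is not covered.
            visible = [f[y][x] for f, m in zip(frames, masks) if not m[y][x]]
            if not visible:
                continue  # never seen uncovered; leave the pixel untouched
            background = median_low(visible)
            for t, mask in enumerate(masks):
                if mask[y][x]:
                    result[t][y][x] = background
    return result


# A bright "object" (9) moves across a dark one-row background (1).
frames = [[[9, 1, 1]], [[1, 9, 1]], [[1, 1, 9]]]
masks = [
    [[True, False, False]],
    [[False, True, False]],
    [[False, False, True]],
]
cleaned = fill_masked(frames, masks)
```

This only works when each hidden pixel is visible in some other frame; handling camera motion and regions that are never uncovered is the hard part that a tool like Cloak has to solve.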

Project Puppetron: Demoed by Jakub Fiser, this project elicited the most laughs for its hilarious renditions of Pakistani-American actor and stand-up comedian Kumail Nanjiani, who was also on stage at Adobe MAX 2017. Essentially, Puppetron combines a series of facial photos with artistic portraits to create puppets directly usable in Character Animator. Much like Prisma, Puppetron’s algorithm can analyze a portrait done as a line drawing or in some other medium and apply that same look to a regular portrait photograph. The image below shows a modern-art sketch stylization applied to a photo, and the remixed result is strikingly accurate.

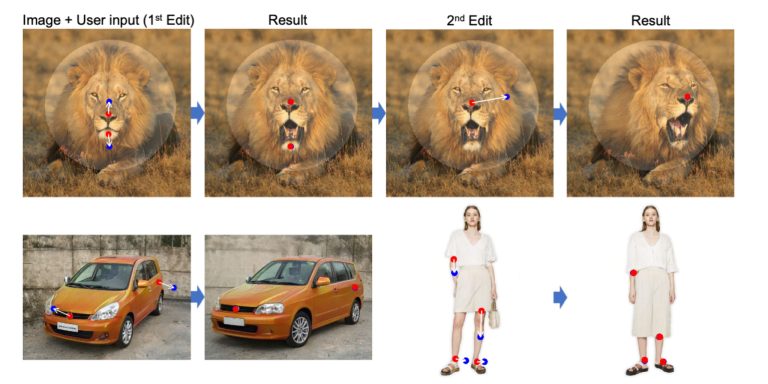

Project DeepFill: So you have heard about the magical Content Aware Fill. Adobe has now taken it one step further and developed a new deep neural network-based image in-painting system, powered by Adobe Sensei, that a) learns to generate image patches that are visually realistic and semantically reasonable, and b) allows interactive customization of results based on users’ brushing and sketch inputs. Adobe’s Jiahui Yu demonstrated how DeepFill is a more sophisticated spin-off of the Content Aware Fill tool, which doesn’t really understand images and relies on copying the surrounding areas or pixels into the hole. “We believe a good image filling system should be able to understand the image. To bridge this gap and solve the challenging hole filling problem, we introduce Project Deep Fill that leverages the power of Adobe Sensei, Deep Learning and Neural Networks to develop a tool that can understand the image. Can it mask an entire eyebrow? It can successfully hallucinate a new eyebrow for you,” explained Yu during the conference.

Project Scribbler: Scribbler is one of those tools that should give more power to illustrators, sketch artists and graphic designers, and could change the way magazines, agencies and newsrooms work by speeding up the colorizing process. The interactive, deep learning-based image generation system, powered by Adobe Sensei, gives users an easy way to express their ideas and visualize their designs through simple sketching and drag-and-drop operations, and then colorizes the end result. Demonstrated by Jingwan Lu, the tool can colorize not only sketches but also black and white photos. Besides colorizing, Lu demonstrated how one can control the shape of an object (in this case a handbag) by sketching it, and then choose the color and pattern to go with it. “You can crop the pattern and paste just to give the neural network some hint what you want the bag to look like,” she shared.

Outlook

Adobe CEO Narayen has always viewed AI as a game changer, a disruptive paradigm shift that will drive revenues and help the software giant woo more customers. That is the reason behind the soaring popularity of Adobe Sensei, which frees users from repetitive tasks and will soon become part of the creative fabric of the design community. Also check out the data visualization tool Project Lincoln, and Project Sidewinder, which could change the VR landscape.