The late 1960s saw a myriad of social experiments conducted at the premier institutes across the world. The Marshmallow Test was one such experiment conducted by Stanford to assess the relation between future success of a kid and their ability to delay gratification.

However, these fancy social experiments lost steam over time as researchers started to realise how complex human behaviour can be. When counter-arguments ensued that the success of a person is more related to his/her economic background than the will power, pinning down a strategy to incentivise humans became more difficult.

Humans can have an innate desire for choosing shortcuts to success. The ethical side of this strategy is beyond the scope of this article. But what if machines that run on reinforcement learning (RL) algorithms, start to crave for rewards or shortcuts to get those rewards with whatever intelligence they have acquired through training?

This leads to something called ‘reward tampering’ or ‘reward corruption’ in an RL environment.

In other words, the agents in an RL environment may have found a way to obtain a reward without doing the task. This is also called reward hacking.

Why Should We Care About Reward Tampering?

An agent can be called as the unit cell of reinforcement learning. An agent receives rewards from the environment, it is optimised through algorithms to maximise this reward collection and complete the task. For example, when a robotic hand moves a chess piece or does a welding operation on automobiles, it is the agent, which drives the specific motors to move the arm.

RL agents strive to maximise reward. This is great as long as the high reward is found only in states that the user finds desirable. However, in systems employed in the real world, there may be undesired shortcuts to high reward involving the agent tampering with the process that determines agent reward, the reward function.

Reward tampering is any type of agent behaviour that instead changes the objective to match reality. For example, it changes the objective so all labels are considered correct, or all states are considered goal states.

For instance, a self-driving car, a positive reward may be given once it reaches the correct destination and negative rewards for breaking traffic rules and causing accidents.

A reward is non-zero only at the end, where it is either −1, 0, or 1, depending on who won. In any practically implemented system, agent reward may not coincide with user utility.

So, if a machine’s output changes significantly as it craves for rewards, can there be a 21st century equivalent of Marshmallow experiment to test machines?

Fortunately and perhaps surprisingly, there are solutions that remove the agent’s incentive to tamper with the reward function. One way to prevent the agent from tampering with the reward function is to isolate or encrypt the reward function, and in other ways trying to physically prevent the agent from reward tampering.

In an attempt to acknowledge the consequences of reward tampering and provide a solution to the same, researchers at DeepMind released a report discussing various aspects of reinforcement learning algorithms. The authors discuss how causal influence diagrams can give more insights into reward tampering problems.

Solving With Causal Influence Diagrams

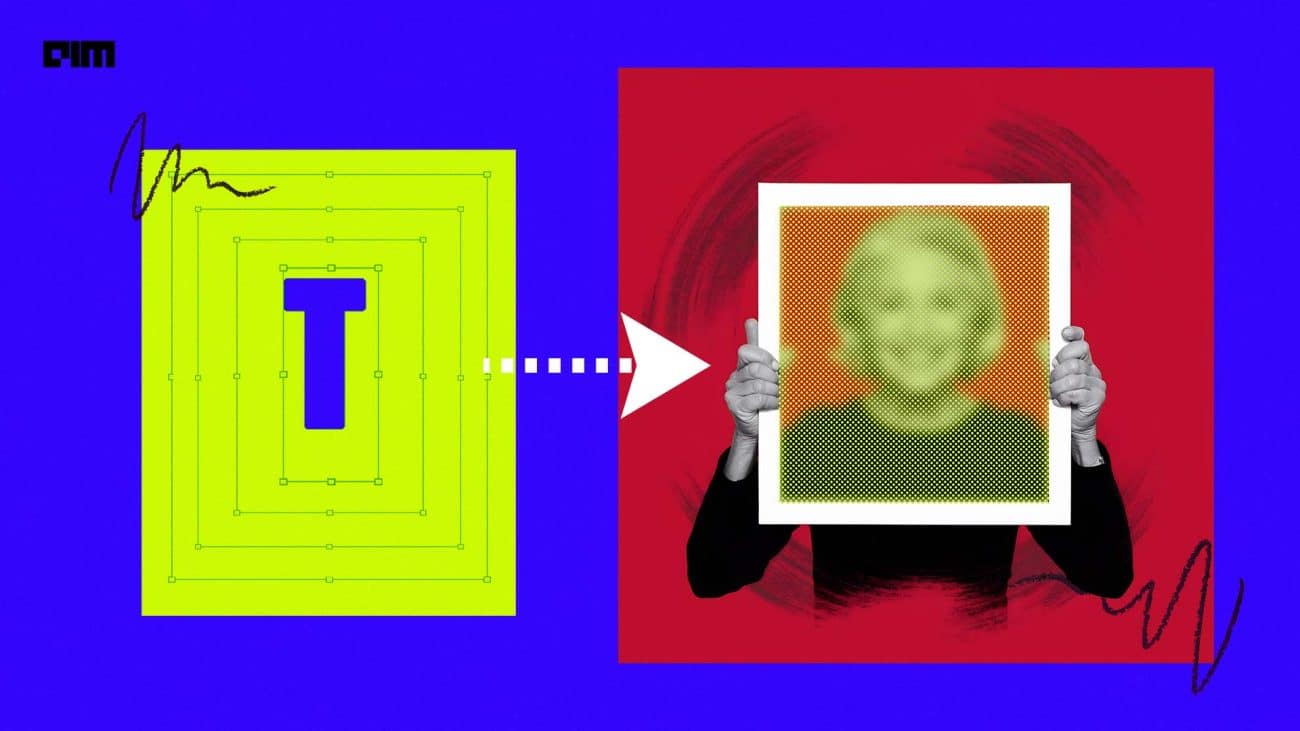

Similar to Bayesian networks and causal graphs, causal influence diagrams as shown above, consist of a directed acyclic graph over a finite set of node square decision nodes representing agent decisions, and diamond utility nodes representing the agent’s optimization objective

An RL environment can be described with a Markov decision process (MDP). It consists of a set of states, a set of rewards, and a set of actions and the goal of the agent is to maximize the sum of the utility nodes.

For all the reward tampering problems and solutions the following were considered by the authors:

- Each reward tampering problem corresponds to a causal path in a suitable causal influence diagram, and

- Each reward tampering solution corresponds to a modification of the diagram that removes the problematic path

Causal influence diagrams reveal both agent and agent incentives. Since causality flows downwards over arrows, a decision can only influence nodes that are downstream of the decision, which means that an agent is only able to influence descendants of its decision nodes.

“There is no reason the current reward function would predict high reward for reward tampering, the learned model may well do that. Indeed, as soon as the agent encounters some tampered-with rewards, a good model will learn to predict high reward for some types of reward tampering,” observed the authors.

When Machines Hate Your Feedback

A problem arises when reward hacking happens. However, the absence of tampering seems to bring its own set of problems too- reward gaming. This problem of reward gaming can occur even if the agent never tampers with the reward function.

One way to mitigate the reward gaming problem is to let the user continuously give feedback to update the reward function, using online reward-modeling.

Whenever the agent finds a strategy with high agent reward but low user utility, the user can give feedback that dissuades the agent from continuing the behaviour. However, a worry with online reward modelling is that the agent may influence the feedback.

For example, the agent may prevent the user from giving feedback while continuing to exploit a misspecified reward function or manipulate the user to give feedback that boosts agent reward but not user utility. Now, this is a feedback tampering problem.

Regardless of whether the reward is chosen by a computer program, a human, or both, a sufficiently capable, real-world agent may find a way to tamper with the decision.

RL algorithms are applied in many robotic processes from pick and place to helping the surgeons. The problem of reward tampering is a typical case of the emergence of evil AI. Fortunately, this has been noticed and efforts are being made to deploy algorithms that don’t get lost in their own strategies.

As with many AI safety problems concerning the behaviour of agents far more capable than today’s agents, the authors believe that an incentive perspective allows us to understand the pros and cons of various agent designs before we have the know-how to build them.

Read the full report here.