My Glass is Half Empty…

Big Data Analytics is riding the wave of a hype cycle. Gartner’s Hype Cycle places it near the top in terms of buzz. As with any buzz, there will be a lot of articles and strong views on the subject. Some plan to turn everything into a big data problem, the classic case of “If you only have a hammer, you tend to see every problem as a nail.”

So it’s not surprising that Gartner Research claims that more than half of analytics projects fail. If that’s true, the leaders implementing those projects are showing no better judgment than someone flipping a coin (come to think of it, they are doing worse than that). What are the symptoms of such failures? Either the projects are not completed within budget or schedule, or they fail to deliver the overly optimistic features and benefits that were promised.

Not exactly reassuring.

So far, the biggest challenge seems to be expectations. A lot of people see Big Data Analytics as a simple three-step process:

· Collect all the data you can find (Data Lake, anyone?).

· Throw some high-end hardware and mathematical models at it.

· Wait for an insight to rise to the top.

And then there are the pessimists. They hate the hype around Big Data and Analytics because they feel insights are almost divine and artistic in nature (if you are one, you will like this delightful book: The War of Art). They feel analytics is out to replace human intuition with machine logic, and they never miss a chance to trash it. To them, it is a buzz that will die down soon enough.

In my humble opinion, both are one-sided views: one sees the glass as half full, the other as half empty.

Start with the Why

In his TED talk, Simon Sinek urges us to start with the why in everything we do. It is a brilliant talk, and if you haven’t watched it, I highly recommend you do. This is a short version.

But let’s go back in time a little and see how this applies to analytics.

In 2001, Oren Etzioni was on a flight from Seattle to LA to attend his brother’s wedding. He had bought the ticket months in advance. But while chatting with his fellow passengers, he realized that many of them had paid less than he had, despite buying their tickets much later. An ordinary passenger would have asked a why question here, but probably along the lines of “Why does this happen to me?” Etzioni asked a different why. He asked himself: “Why don’t I collect historical data and use it to anticipate ticket prices?”

Then again, Etzioni was no ordinary passenger. He was Harvard’s first undergraduate to major in Computer Science (1986). He and his student Erik Selberg built MetaCrawler, the first ever web-based metasearch engine, which was later bought by InfoSpace. He had also worked as a consultant to Microsoft and Google, among other notable technology companies.

In 2003, Etzioni and colleagues published a paper describing how to predict fluctuations in airline ticket prices, that is, whether a given fare would go up or down. For this, they pulled close to 12,000 airfare records for flights from Seattle to Washington DC and from LA to Boston. They could predict whether the ticket price would rise or fall with 62% accuracy. By 2006, he had launched a site, Farecast, to the public. By then he had access to a massive 175 billion airfare records from around the country, and accuracy had improved by leaps and bounds. The site is no longer online. In April 2008, Microsoft picked up Farecast for a cool $115 million and later integrated it into Bing Travel.
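To make an accuracy figure like 62% concrete: predicting whether a fare will rise or fall is a two-way call, so accuracy is simply the fraction of calls that turned out right. A fair coin would be expected to get about 50% of such calls right, which is the baseline any such figure should be compared against. The sketch below is a minimal illustration under that reading; the function name and all the observations are hypothetical, not taken from the 2003 paper.

```python
import random


def directional_accuracy(predicted, actual):
    """Fraction of rise/fall calls that match what actually happened.

    Both arguments are equal-length sequences of "up"/"down" labels.
    """
    if len(predicted) != len(actual) or not actual:
        raise ValueError("need two non-empty sequences of equal length")
    hits = sum(p == a for p, a in zip(predicted, actual))
    return hits / len(actual)


# Hypothetical data: what each fare actually did next, and a model's calls.
actual = ["up", "down", "down", "up", "up", "down", "up", "down"]
model = ["up", "down", "up", "up", "down", "down", "up", "down"]
print(directional_accuracy(model, actual))  # 0.75 (6 of 8 calls right)

# Coin-flip baseline: over many random calls, accuracy settles near 0.5.
rng = random.Random(42)
n = 100_000
long_actual = [rng.choice(("up", "down")) for _ in range(n)]
coin_calls = [rng.choice(("up", "down")) for _ in range(n)]
baseline = directional_accuracy(coin_calls, long_actual)
print(f"coin-flip accuracy: {baseline:.3f}")  # close to 0.5
```

Judged this way, 62% is a real but modest edge over chance on a single up/down call.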

When we are immersed in all that math and computer science, it’s easy to forget that analytics is also about mindset. Succeeding in analytics begins with improving our ability to ask the right why questions. And how do we improve that? I would argue it comes from three key traits:

· Experience

· Intuition

· Curiosity

And that is why firing your Business Analyst team and replacing them with computers and machine learning is not going to work. There has to be somebody to ask those questions. Sound human intuition is irreplaceable.

What Does the Data tell Me?

Time to switch sides. Let me take the glass-half-full approach of the data purists and address the “I only work by gut feeling” cowboys.

Let’s go even further back in time. In 1990, Orley Ashenfelter, an economist at Princeton University, claimed he could predict wine quality without tasting it. He even put down a formula:

Wine Quality = 12.145 + 0.00117 × Winter Rainfall + 0.0614 × Average Growing Season Temperature − 0.00386 × Harvest Rainfall
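Because the formula is a plain linear equation, it is easy to evaluate. The sketch below codes it up with the coefficients exactly as quoted in this article. The article does not give units for the inputs, so the two vintages below use invented, illustrative numbers whose only purpose is to show the direction of each effect; the function and variable names are my own.

```python
def wine_quality(winter_rain, growing_temp, harvest_rain):
    """Ashenfelter's formula, with coefficients copied from the article.

    Units are not specified in the source; inputs here are illustrative.
    """
    return (12.145
            + 0.00117 * winter_rain     # wetter winter: slightly higher score
            + 0.0614 * growing_temp     # hotter growing season: higher score
            - 0.00386 * harvest_rain)   # rain at harvest: lower score


# Two hypothetical vintages with the same winter rain but different summers.
hot_dry = wine_quality(winter_rain=600, growing_temp=18, harvest_rain=50)
cool_wet = wine_quality(winter_rain=600, growing_temp=15, harvest_rain=250)

print(f"hot, dry harvest:  {hot_dry:.3f}")   # 13.759
print(f"cool, wet harvest: {cool_wet:.3f}")  # 12.803
```

The signs of the temperature and harvest-rain terms line up with Ashenfelter’s reasoning about ripeness and concentration: the hot, dry vintage scores higher.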

Now, it must be said that Ashenfelter was a former editor of the American Economic Review and a wine enthusiast. His logic was simple enough: Bordeaux is best when the grapes are ripe and their juice is concentrated. Grapes ripen when the summer is hot. Their juice gets concentrated when there is less rain during the harvest.

The New York Times covered his claim on its front page. Robert Parker, considered the most influential wine critic in America at the time, called Professor Ashenfelter’s research “ludicrous and absurd.” Britain’s Wine magazine said “the formula’s self-evident silliness invite[s] disrespect.” For good measure, Parker offered a compelling analogy: Ashenfelter was like a movie critic who never goes to see the movie but tells you how good it is based on the actors and the director. I would have agreed with Parker and the critics. It seems extremely counter-intuitive that wine quality can be predicted before the wine is even bottled, and without the fine art of swishing, sniffing and swirling. In fact, I loved that analogy.

Only, Ashenfelter was proved right.

He had predicted that the 1989 Bordeaux, which at the time had spent barely three months in the cask, would be “the wine of the century.” The 1989 outsold the 1986, which Parker had rated as “exceptional.” He also predicted that the 1990 would be even better, and it was. Since then, a few wine experts have publicly acknowledged the power of Ashenfelter’s equation, and many have adopted it as well.

We humans are used to making decisions based on experience and intuition. That has served us well for centuries, and it will continue to do so. However, relying on intuition alone can still lead you to the wrong answer. The job of analytics is to augment decision-making: we need to see what the data tells us and be guided by that insight.

How can I create Value?

Not all data and insights are created equal. Not all data yields valuable insights, and not all insights are worth implementing. To be worth acting on, an insight needs to clear multiple rounds of filters:

· Technical feasibility (“Can I implement it in the schedule/budget planned?”).

· Business feasibility (“Will I get an ROI from this investment?”).

· Legal (“Is it violating any rights?”).

· And more (“Is it ethical?”, for one).

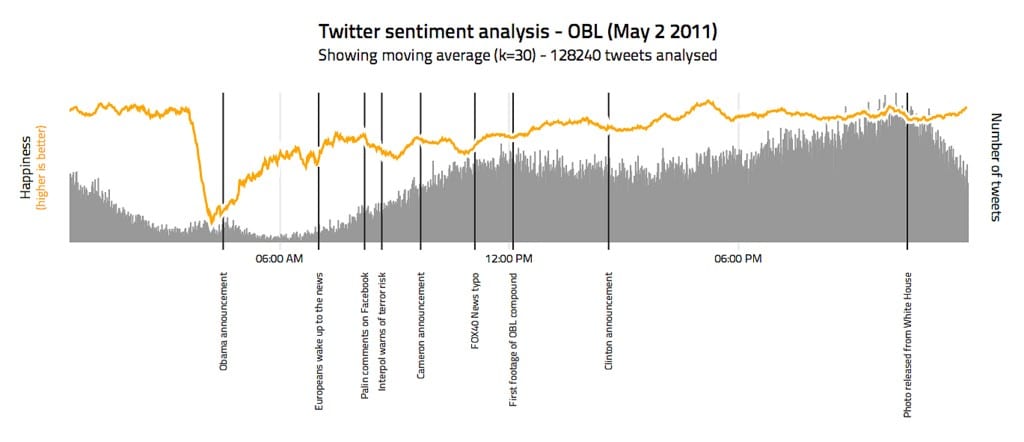
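One way to picture these filters is as a sequence of gates that an insight must pass in order, where failing any one gate stops it. The sketch below is a minimal, hypothetical illustration: the `Insight` fields, the "ROI above zero" threshold and the two example ideas are placeholders for what, in practice, are human judgment calls, not a real scoring method.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Insight:
    # Hypothetical fields; real assessments come from people, not flags.
    name: str
    fits_budget_and_schedule: bool
    expected_roi: float  # e.g. 0.2 for an expected 20% return
    is_legal: bool
    is_ethical: bool


# Each gate pairs a label with a yes/no check, in the order listed above.
Gate = Tuple[str, Callable[[Insight], bool]]
GATES: List[Gate] = [
    ("technical feasibility", lambda i: i.fits_budget_and_schedule),
    ("business feasibility", lambda i: i.expected_roi > 0),  # assumed threshold
    ("legal", lambda i: i.is_legal),
    ("ethical", lambda i: i.is_ethical),
]


def first_failed_gate(insight: Insight) -> Optional[str]:
    """Return the label of the first gate the insight fails, or None if it clears all."""
    for label, check in GATES:
        if not check(insight):
            return label
    return None


idea_a = Insight("idea A (hypothetical)", True, -0.1, True, True)
idea_b = Insight("idea B (hypothetical)", True, 0.2, True, True)

print(first_failed_gate(idea_a))  # business feasibility
print(first_failed_gate(idea_b))  # None
```

Ordering the gates this way means an insight that is technically feasible can still be stopped at the business, legal or ethical stage.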

Consider the study “Twitter mood predicts the stock market”, submitted to Cornell’s arXiv preprint server. Here’s the summary: drawing on behavioral economics, the researchers asked whether they could predict the performance of the Dow Jones Industrial Average (DJIA) from public mood. For this, they used Twitter feeds as data and two mood-tracking tools to analyze them. The first was OpinionFinder. The second was Google Profile of Mood States (GPOMS), which measures mood in six dimensions (Calm, Alert, Sure, Vital, Kind and Happy). The result? By adding Twitter mood analysis to existing methods, they claimed an accuracy of 87.6% in predicting the daily ups and downs of the DJIA.

Of course, the study was widely cited, discussed and also criticized. That did not stop London-based Derwent Capital Markets from launching a new hedge fund, the Absolute Return Fund (popularly dubbed the “Twitter Fund”). The result: it was quietly folded within a month. You can read about it here.

Now, there could be many reasons for the failure. A key one seems to be that most investors thought it was a crazy idea. But the point stands: the insight did not pass the business feasibility test. Apparently, it was not the only such fund to fold; a few others attempted similar hedge funds and failed. (In the interest of full disclosure, let me add that the founder, Paul Hawtin, moved to the Cayman Islands and relaunched the concept in a different avatar. I don’t know how the new fund has performed.)

Lastly, it’s important to consider the reactions of the stakeholders involved. These include, but are not limited to, the public, the government and competitors. If analytics tells you that cutting your price by 10% will get you an additional 15% of the market, check whether that prediction accounts for competitor reaction. Do you expect them to sit idle and watch their market share slip away? What happens if they cut prices as well, leading to a price war and a zero-sum game? And what if you are sure they cannot match your price? Even then, check whether they could raise a legal complaint of unfair competition.
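The arithmetic behind that warning is worth spelling out. The sketch below assumes (my assumption, since the article leaves it open) that “an additional 15% of the market” means 15% more units sold, and compares revenue in two scenarios: competitors stay put, or they match the cut and your volume stays where it was. Costs, market growth and everything else are deliberately ignored; the function name is hypothetical.

```python
def revenue_change(price_cut, volume_gain):
    """Relative revenue change when price falls by price_cut and units rise by volume_gain.

    Both arguments are fractions, e.g. 0.10 for 10%. Revenue = price x units.
    """
    return (1 - price_cut) * (1 + volume_gain) - 1


# Scenario 1: competitors sit idle and the predicted 15% volume gain materializes.
idle = revenue_change(price_cut=0.10, volume_gain=0.15)

# Scenario 2: competitors match the cut, so (by assumption) volume stays flat.
matched = revenue_change(price_cut=0.10, volume_gain=0.0)

print(f"competitors idle:  {idle:+.1%}")     # +3.5%
print(f"competitors match: {matched:+.1%}")  # -10.0%
```

Even in the favourable scenario, revenue grows only about 3.5%, and a matched cut turns it into a 10% drop. Profit, as opposed to revenue, would also depend on costs, which this sketch leaves out.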

When Magic happens

So there you have it: the Why, the What and the How. The first rests on intuition, the second on insights from data and the last on sound business acumen. Combine them, and you are sure to create something magical.

In Closing…

I am grateful that you cared enough to read this far. I truly enjoyed writing this and feel it has wide applicability. I sincerely hope you found this article useful. As any good data scientist knows, there will always be errors in judgement and outliers. I would be happy to hear your views, so I invite your comments.